Open Source ETL Tools: Comparison Guide 2026

Choosing the right ETL tool can save your team hours and streamline your data workflows. This guide compares six open-source ETL tools: Airbyte, Apache Airflow, Apache NiFi, Talend Open Studio, Pentaho Data Integration (PDI), and Meltano. Each takes a different approach, from real-time processing to code-first flexibility, and the choice matters more as data volumes grow: global data volume was projected to reach 181 zettabytes by 2025.

Key takeaways:

- Airbyte: Over 600 connectors, AI-powered builder, great for diverse data sources.

- Apache Airflow: Python-based orchestration for complex workflows.

- Apache NiFi: Real-time dataflows with visual design and strong security.

- Talend Open Studio: Retired as of January 31, 2026; migration needed.

- Pentaho PDI: Visual interface, big data support, but older tech.

- Meltano: Developer-friendly, GitOps-focused, with strong customization.

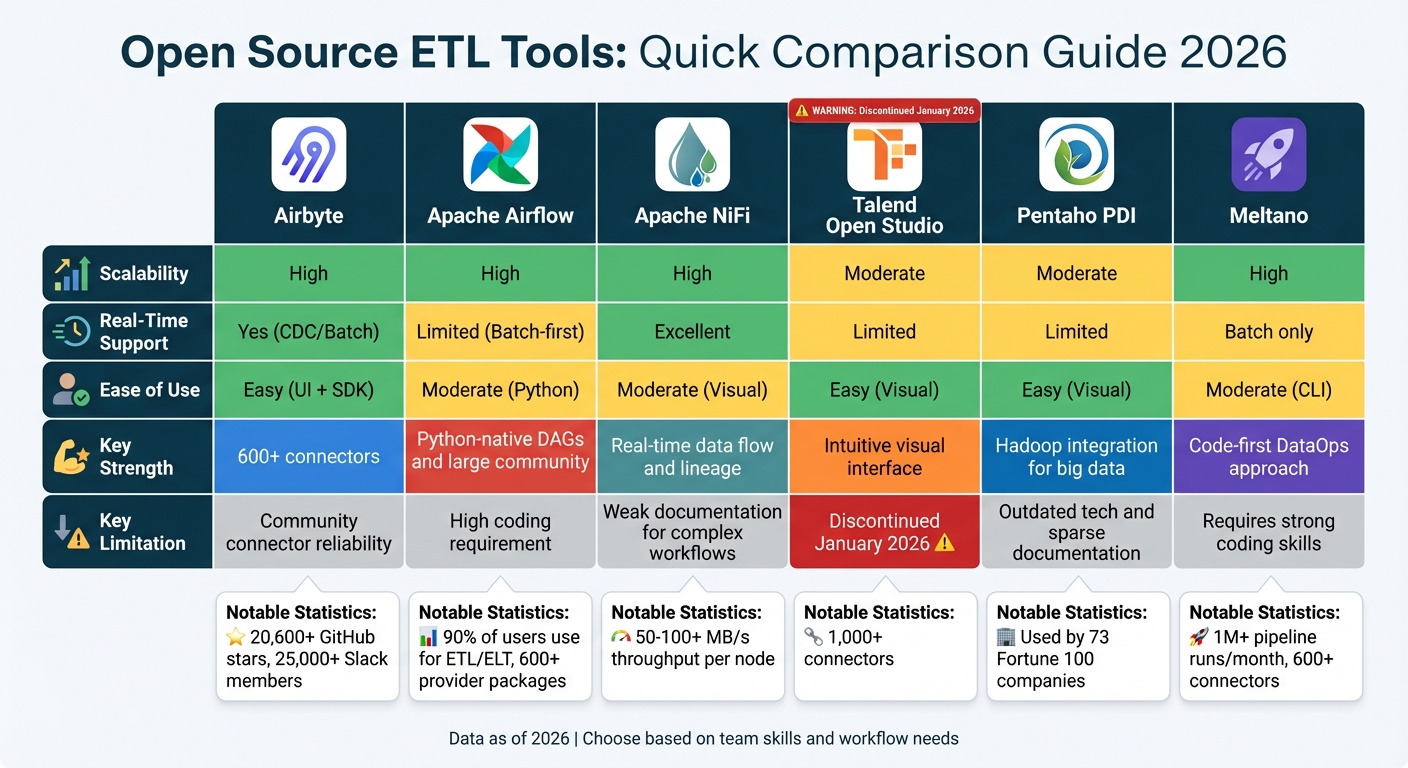

Quick Comparison:

| Tool | Scalability | Real-Time Support | Ease of Use | Key Feature | Limitation |
|---|---|---|---|---|---|
| Airbyte | High | Yes (CDC/Batch) | Easy | 600+ connectors | Community-built connectors vary |
| Apache Airflow | High | Limited (Batch) | Moderate | Python-native DAGs | Requires coding expertise |
| Apache NiFi | High | Excellent | Moderate | Real-time data lineage | Documentation gaps |
| Talend Open Studio | Moderate | Limited | Easy | Intuitive visual interface | Discontinued in 2026 |
| Pentaho PDI | Moderate | Limited | Easy | Hadoop integration | Outdated design |
| Meltano | High | Batch only | Moderate | Code-first approach | Requires strong coding skills |

The choice depends on your team's skills and workflow needs. Keep reading for a detailed breakdown of each tool's capabilities, performance, and suitability.

1. Airbyte

Airbyte stands out as a leading open-source ETL platform, offering over 600 pre-built connectors. These connectors cover a wide range of sources, from databases and SaaS platforms to specialized APIs, giving data teams the ability to link nearly any data source without having to create custom integrations from the ground up.

Capabilities

Airbyte’s AI-powered Connector Builder uses large language models to generate connectors directly from API documentation in minutes, reportedly cutting maintenance time by as much as 60%. For teams working with proprietary or less common APIs, the low-code Connector Development Kit (CDK) provides a Python-based framework to streamline the development process.

Designed for ELT workflows, Airbyte integrates with dbt for post-load transformations. It supports GenAI use cases through vector database destinations such as Pinecone, Milvus, and Weaviate, and it uses log-based Change Data Capture (CDC) for near real-time syncing from PostgreSQL, MySQL, and SQL Server. These capabilities make it a powerful tool for teams looking to scale efficiently.
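To make log-based CDC more concrete, here is a minimal sketch of the idea: a replica is kept in sync by replaying an ordered stream of change events and remembering the last log position applied. The event shape (`lsn`, `op`, `key`, `row`) and the `apply_changes` helper are hypothetical and chosen for illustration; this is not Airbyte's wire format or API.

```python
from typing import Any

# Hypothetical change-event shape, NOT Airbyte's wire format:
# {"lsn": int, "op": "insert" | "update" | "delete", "key": ..., "row": {...} or None}
ChangeEvent = dict[str, Any]


def apply_changes(replica: dict[Any, dict], events: list[ChangeEvent], last_lsn: int = 0) -> int:
    """Replay change events in log order and return the new high-water mark."""
    for event in sorted(events, key=lambda e: e["lsn"]):
        if event["lsn"] <= last_lsn:
            continue  # already applied in an earlier sync, so replay stays idempotent
        if event["op"] == "delete":
            replica.pop(event["key"], None)
        else:
            # Inserts and updates are both upserts on the replica.
            replica[event["key"]] = event["row"]
        last_lsn = event["lsn"]
    return last_lsn


replica: dict[Any, dict] = {}
events = [
    {"lsn": 1, "op": "insert", "key": 1, "row": {"id": 1, "email": "a@example.com"}},
    {"lsn": 2, "op": "update", "key": 1, "row": {"id": 1, "email": "b@example.com"}},
    {"lsn": 3, "op": "insert", "key": 2, "row": {"id": 2, "email": "c@example.com"}},
    {"lsn": 4, "op": "delete", "key": 2, "row": None},
]
checkpoint = apply_changes(replica, events)
```

Because only events newer than the saved position are applied, a sync moves just the changes since the last run instead of re-reading whole tables, which is what makes near real-time syncing practical.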

Performance

Airbyte’s Kubernetes-native architecture ensures it can scale horizontally to handle workloads at the petabyte level. The platform supports parallel syncs and optimizes resource allocation, making it possible to process multiple data streams simultaneously. Its "workloads" architecture separates orchestration and scheduling from data movement, which improves reliability during periods of high demand. Additionally, resumable full refreshes allow large data streams to pick up where they left off after temporary disruptions, avoiding the need to start over.
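The idea behind a resumable refresh can be sketched in a few lines: checkpoint progress after every successful batch, and start the next attempt from the saved position. This is a simplified illustration under assumed names (`resumable_copy`, an offset-based `state` dict, a simulated flaky writer), not Airbyte's implementation.

```python
from typing import Callable


def resumable_copy(
    source: list[dict],
    write_batch: Callable[[list[dict]], None],
    state: dict,
    batch_size: int = 2,
) -> None:
    """Copy `source` in batches, recording the next offset after every successful write."""
    offset = state.get("offset", 0)
    while offset < len(source):
        batch = source[offset : offset + batch_size]
        write_batch(batch)  # may raise on a transient failure
        offset += len(batch)
        state["offset"] = offset  # checkpoint only after the write succeeded


rows = [{"id": i} for i in range(5)]
destination: list[dict] = []
state: dict = {}
calls = {"n": 0}


def flaky_write(batch: list[dict]) -> None:
    """Simulated destination that fails once, on the second call."""
    calls["n"] += 1
    if calls["n"] == 2:
        raise ConnectionError("simulated transient outage")
    destination.extend(batch)


try:
    resumable_copy(rows, flaky_write, state)
except ConnectionError:
    pass  # the first attempt stops after one committed batch

resumable_copy(rows, flaky_write, state)  # the second attempt resumes at the saved offset
```

The first batch is never rewritten on the retry, because the checkpoint is stored only after a write succeeds.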

Suitability

Airbyte is an excellent choice for teams needing to connect to a wide array of data sources, especially when data sovereignty is a priority. Its no-code builder is particularly useful for working with niche or proprietary APIs. For industries like healthcare and finance that require strict control over data, Airbyte’s self-hosted option provides the necessary flexibility. However, community-maintained connectors might not be as dependable as those managed by Airbyte's core team, and self-hosted deployments demand significant technical expertise for upkeep.

Community Support

Airbyte boasts a vibrant community with over 20,600 GitHub stars, 5,000 forks, and 1,140 contributors. Its Slack community includes more than 25,000 members, showcasing active engagement and support. Data Analytics Expert Jim Kutz highlights the platform’s adaptability:

"Airbyte's open-source foundation and connector ecosystem future-proof your stack against vendor lock-in and evolving needs."

2. Apache Airflow

Apache Airflow has become a go-to tool for orchestrating data pipelines, with 90% of users leveraging it specifically for ETL/ELT workflows. Unlike tools that focus purely on moving data, Airflow shines in orchestration - managing when and how tasks run across your infrastructure. It ensures tasks are executed in a well-coordinated manner.

Capabilities

Airflow uses a "workflows-as-code" approach, allowing you to define entire pipelines in Python. Its provider packages, installed separately from the core, integrate with major cloud platforms and essential data tools. Its scheduler makes it easy to set up recurring pipelines, backfill historical data, and retry failed tasks.
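The core idea of workflows-as-code, tasks plus declared dependencies plus retries, can be illustrated without Airflow itself. The sketch below uses only Python's standard library (`graphlib`) and a hypothetical `run_pipeline` helper; it is not Airflow's API, and a real DAG would use Airflow's own operators and scheduler.

```python
from graphlib import TopologicalSorter
from typing import Callable


def run_pipeline(
    tasks: dict[str, Callable[[], None]],
    upstream: dict[str, set[str]],
    max_retries: int = 2,
) -> list[str]:
    """Run tasks in dependency order, retrying a failed task up to `max_retries` times."""
    completed = []
    # `upstream` maps each task to the tasks that must finish before it.
    for name in TopologicalSorter(upstream).static_order():
        for attempt in range(max_retries + 1):
            try:
                tasks[name]()
                break
            except Exception:
                if attempt == max_retries:
                    raise  # retries exhausted, so fail the whole run
        completed.append(name)
    return completed


log: list[str] = []
attempts = {"transform": 0}


def extract() -> None:
    log.append("extract")


def transform() -> None:
    attempts["transform"] += 1
    if attempts["transform"] == 1:
        raise RuntimeError("simulated transient failure")
    log.append("transform")


def load() -> None:
    log.append("load")


order = run_pipeline(
    {"extract": extract, "transform": transform, "load": load},
    upstream={"transform": {"extract"}, "load": {"transform"}},
)
```

Because the pipeline is ordinary Python, it can be reviewed, versioned, and tested like any other code, which is the main appeal of the approach.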

Recent releases introduced a Task SDK, which decouples DAG (Directed Acyclic Graph) authoring from Airflow's internals and gives authors a more stable, Python-friendly interface. Another useful feature is Datasets (renamed Assets in Airflow 3), which let a workflow trigger automatically when an upstream task updates the data it depends on. Together, these features make large, interdependent pipelines easier to build and maintain.

Performance

Airflow's modular design and use of message queues allow it to scale effectively, even for massive workloads. For example, Lyft uses Airflow to manage 9 trillion events and 750,000 data pipelines every month. Designed as a distributed system, it schedules tasks in parallel, making it ideal for batch-oriented workflows. However, it’s not optimized for continuous, event-driven, or streaming workloads. To maximize performance, heavy processing tasks should be offloaded to external compute engines like Spark or Snowflake.

Suitability

Airflow is a great fit for teams that prefer coding their pipelines, especially if those pipelines have complex dependencies or require detailed scheduling and retry controls. It often complements tools like Apache Kafka: Kafka handles real-time data ingestion, while Airflow processes the stored data on a schedule. Constantine Slisenka of Lyft Engineering made a similar point about adopting complementary tools, explaining that the goal "was not to replace Airflow but to complement the company's tooling ecosystem."

Airflow is free to use under the Apache License, but managed services like Astronomer offer pay-as-you-go options starting at $0.35 per hour. Its combination of flexibility, scalability, and strong community backing makes it a standout option in the open-source ETL world.

Community Support

One of Airflow’s biggest strengths is its active and engaged community. With global Slack channels, mailing lists, and a wealth of learning resources like books and conferences, users have access to extensive support. Its widespread adoption means there’s no shortage of tutorials, troubleshooting guides, and practical examples to help teams succeed.

3. Apache NiFi

Apache NiFi, an Apache Software Foundation project initially developed by the NSA, simplifies and automates dataflows. With its drag-and-drop interface, users can design and monitor pipelines in real time. The sections below cover NiFi’s features, performance, and practical applications.

Capabilities

One standout feature of NiFi is its built-in data provenance, which tracks the complete lineage of every piece of data from start to finish. This level of traceability is crucial for industries with strict compliance requirements, ensuring every step in data processing can be verified. To handle data surges, NiFi includes back-pressure controls that prevent downstream systems from becoming overwhelmed. Additionally, its persistent write-ahead log ensures reliable data delivery, even in the event of node failures.
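Back-pressure is easier to reason about with a small simulation. NiFi configures back-pressure on the connections between processors; the sketch below models only an object-count threshold, and the `Connection` class and tick-based `run` loop are hypothetical stand-ins rather than NiFi's classes.

```python
from collections import deque


class Connection:
    """A bounded queue between two processing steps (hypothetical, not NiFi's classes)."""

    def __init__(self, object_threshold: int) -> None:
        self.object_threshold = object_threshold
        self.queue: deque = deque()

    def back_pressure_engaged(self) -> bool:
        return len(self.queue) >= self.object_threshold


def run(source: list[int], conn: Connection, consume_every: int) -> dict:
    """Simulate ticks: the producer runs each tick unless back-pressure is engaged,
    and the slower consumer runs once every `consume_every` ticks."""
    pending = deque(source)
    delivered: list[int] = []
    skipped_ticks = tick = peak = 0
    while pending or conn.queue:
        tick += 1
        if pending:
            if conn.back_pressure_engaged():
                skipped_ticks += 1  # the upstream step is paused, not dropped
            else:
                conn.queue.append(pending.popleft())
        peak = max(peak, len(conn.queue))
        if tick % consume_every == 0 and conn.queue:
            delivered.append(conn.queue.popleft())
    return {
        "delivered": delivered,
        "peak_queue_depth": peak,
        "skipped_producer_ticks": skipped_ticks,
    }


result = run(list(range(6)), Connection(object_threshold=2), consume_every=2)
```

The producer is paused whenever the queue is full, so the queue never grows past the threshold and every item is still delivered in order. That is the trade back-pressure makes: it slows the upstream side instead of overwhelming the downstream one.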

Security is another strong point. NiFi supports two-way SSL (mutual TLS) and encrypted content, and its multi-tenant authorization lets teams manage their own flows securely within shared clusters. For edge computing, NiFi offers MiNiFi, a lightweight version designed for IoT devices. MiNiFi gathers data directly at the source and sends it to a central cluster for processing.

Performance

NiFi’s performance is solid, with a single node typically reading and writing data at around 50 MB per second on standard disks. With proper configuration, throughput can exceed 100 MB per second. Its "Zero-Leader" clustering model ensures scalability by enabling all nodes to perform the same tasks on different data sets, allowing throughput to grow linearly as new nodes are added. However, achieving optimal performance often requires fine-tuning JVM settings to maximize I/O efficiency.
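If throughput does grow roughly linearly with node count, cluster sizing becomes simple arithmetic. The helper below is a back-of-the-envelope sketch: the 50 MB/s per-node default comes from the figure above, while `nodes_needed` and the 30% `headroom` reserve are assumptions made for illustration, not NiFi guidance.

```python
import math


def nodes_needed(
    target_mb_per_s: float,
    per_node_mb_per_s: float = 50.0,
    headroom: float = 0.3,
) -> int:
    """Estimate cluster size, assuming throughput scales linearly with node count.

    `headroom` is the fraction of each node's capacity held in reserve for bursts.
    """
    usable = per_node_mb_per_s * (1.0 - headroom)
    return math.ceil(target_mb_per_s / usable)


baseline = nodes_needed(400)  # 50 MB/s nodes with 30% held in reserve
tuned = nodes_needed(400, per_node_mb_per_s=100.0, headroom=0.0)  # tuned nodes, no reserve
```

Under these assumptions a 400 MB/s target needs 12 untuned nodes but only 4 well-tuned ones, which shows why the JVM and disk tuning mentioned above can pay for itself.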

Suitability

NiFi shines in real-time streaming scenarios like IoT data collection, log ingestion, and event-driven alert systems - situations where low latency is essential. It’s particularly effective in hybrid and multi-cloud setups, using its Site-to-Site protocol to bridge on-premises systems with cloud platforms. The user-friendly visual interface makes it appealing for teams that prefer creating pipelines without writing extensive code.

That said, NiFi does come with challenges. Managing clusters, handling manual scaling, and maintaining JVM configurations require ongoing operational effort. Teams that rely heavily on pre-built SaaS connectors or need advanced SQL-based transformations might find other tools more efficient. While NiFi is free under the Apache License 2.0, organizations should factor in the costs of infrastructure and the engineering time needed for setup and maintenance.

Community Support

NiFi enjoys active development and a large user base, with thousands of organizations leveraging it for tasks like cybersecurity, observability, and generative AI data pipelines. The Apache Software Foundation project offers extensive documentation, mailing lists, and community forums for support. For enterprises needing continuous assistance, commercial support options and tools like Data Flow Manager are available.

4. Talend Open Studio

Important note: Talend Open Studio was officially retired on January 31, 2026, and is no longer supported by Qlik. After two decades of service to the data community, its retirement means existing users need a migration plan. Below, we outline its key features, performance, suitability, and the state of its community support.

Capabilities

Talend Open Studio offered a user-friendly drag-and-drop interface with access to over 1,000 connectors and components. It was particularly effective at managing complex data formats like JSON, XML, and B2B standards (such as HL7 and EDI), thanks to its visual mapping tools. The metadata injection feature allowed teams to create reusable pipeline templates, simplifying tasks like data warehousing. Additionally, the platform prioritized data governance with features like built-in quality checks, metadata management, and role-based access controls.

Performance

Widely regarded as one of the top open-source tools for big data integration, Talend Open Studio supported parallel processing, enabling it to handle large datasets efficiently. It also integrated seamlessly with big data technologies like Hadoop and utilized cloud-native features to boost performance when working with cloud resources. However, achieving optimal performance for large datasets often required manual tuning and a solid understanding of the tool's technical intricacies.

Suitability

Talend Open Studio was a strong fit for organizations needing advanced transformations and comprehensive data governance. With over 1,000 connectors and components, more than Airbyte (600+) or Apache NiFi (300+), it provided very broad connectivity. However, its steep learning curve often slowed productivity, even for seasoned developers. The open-source version also lacked built-in scheduling and orchestration, making manual setup or third-party tools necessary. Now that support has ended, organizations must transition to actively maintained solutions to ensure security and compatibility.

Community Support

Over the years, Talend Open Studio thrived with the backing of an active community and extensive documentation. Talend, now part of Qlik, was recognized as a Leader in the Gartner Magic Quadrant for Data Integration Tools for ten consecutive years. However, with the open-source version retired, users no longer receive bug fixes, security updates, or official support. Teams that still rely on it must allocate internal resources for maintenance and troubleshooting, which could lead to growing technical debt as the tool becomes outdated and incompatible with modern data stacks.

5. Pentaho Data Integration (PDI)

Pentaho Data Integration (PDI), also known as Kettle, is trusted by 73 of the Fortune 100 companies. This long-standing open-source ETL tool offers a user-friendly drag-and-drop interface called Spoon, enabling teams to build data pipelines without needing to write code. With over 200 connectors, PDI integrates seamlessly with databases, SaaS applications, IoT devices, and big data platforms, making it a flexible option for hybrid cloud setups across AWS, Azure, and GCP.

Capabilities

PDI shines with its visual workflow designer and metadata injection feature, which allows teams to create reusable transformation templates. It supports AI and ML workflows, integrating models built with tools like Spark, R, Python, Scala, or Weka. Additionally, a GenAI plugin suite enhances functionality with LLM connectivity and automated parsing. The tool comprises four main components: Spoon for design, Pan for executing transformations, Kitchen for job execution, and Carte for remote or clustered execution.
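The idea behind metadata injection is that one template handles many sources, with everything source-specific supplied at run time. The sketch below shows that pattern in plain Python; `run_template` and the metadata layout are hypothetical and do not reflect PDI's file formats or step configuration.

```python
import csv
import io


def run_template(raw_csv: str, metadata: dict) -> list[dict]:
    """One reusable 'transformation': everything source-specific comes from `metadata`."""
    casts = {"int": int, "float": float, "str": str}
    reader = csv.DictReader(io.StringIO(raw_csv), delimiter=metadata["delimiter"])
    rows = []
    for record in reader:
        # Rename and cast each field as described by the injected metadata.
        rows.append(
            {
                field["target"]: casts[field["type"]](record[field["source"]])
                for field in metadata["fields"]
            }
        )
    return rows


orders_meta = {
    "delimiter": ",",
    "fields": [
        {"source": "order_id", "target": "id", "type": "int"},
        {"source": "total", "target": "amount", "type": "float"},
    ],
}
customers_meta = {
    "delimiter": ";",
    "fields": [
        {"source": "cust_no", "target": "id", "type": "int"},
        {"source": "name", "target": "name", "type": "str"},
    ],
}

orders = run_template("order_id,total\n1,9.50\n2,20\n", orders_meta)
customers = run_template("cust_no;name\n7;Ada\n", customers_meta)
```

Adding a third source means writing a new metadata entry rather than a new pipeline, which is where the reuse comes from.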

The platform has proven its efficiency in real-world use cases. For instance, VNG Handel & Vertrieb cut storage costs by 91%, while data scientists saved up to 55% of the time they previously spent on data discovery and evaluation. These features make PDI a strong choice for organizations looking to streamline operations and improve scalability.

Performance

PDI supports both horizontal and vertical scaling, with compatibility for Docker and Kubernetes deployments. Its native integration with Hadoop and Spark allows it to handle massive data volumes in distributed environments. A notable example of its speed is Marketo, where Business Intelligence Engineer Simon Lee used PDI to deliver a feature-packed product in just eight weeks.

However, the graphical interface can slow down during complex tasks. Unlike modern cloud-native tools, PDI requires manual setup, which may pose a challenge for some teams. While it excels in batch ETL processes, its native real-time streaming and Change Data Capture (CDC) capabilities are limited and often depend on plugins or community extensions. Despite these challenges, organizations have reported up to 80% savings in data operations costs with PDI, although achieving such results requires careful optimization.

Suitability

PDI is ideal for organizations needing granular control over ETL processes and possessing the technical expertise to manage infrastructure. The free Community Edition provides core features but lacks official support, while the Enterprise Edition offers advanced options like ETL clustering, High Availability (HA), and metadata-driven lineage. Juan Carlos Garcia, Leader of the Business Intelligence Team at EULEN, highlighted its impact: "With Pentaho, we have greatly improved EULEN's time-to-insight, with users now able to access the data they need to track key business metrics near-instantly".

PDI also supports hybrid deployments, offering flexibility for both on-premises and cloud configurations. This adaptability makes it a solid choice for organizations balancing traditional and cloud-based strategies.

Community Support

PDI holds a 4.3/5 rating on G2 and 4.1/5 on Gartner Peer Insights from 137 reviews. Resources like Pentaho Academy, official documentation, technical blogs, and webinars provide structured learning opportunities. Developers often turn to Stack Overflow for assistance, though the community is smaller compared to those around Microsoft or Oracle products.

Jude Vanniasinghe, Senior Manager of Business Intelligence at Bell Canada, praised it as "a great tool that's evolved to meet the challenges of real people". While the free Developer Edition relies on community forums for support, Enterprise Edition users benefit from 24/7 premium phone and email assistance. For teams using the free version, implementation support is limited, requiring them to manage deployments independently unless they opt for a paid license.

6. Meltano

Meltano stands out among open-source ETL tools by prioritizing developer control and adaptability, making it a strong choice for modern data integrations. It treats data pipelines as code, incorporating version control, CI/CD testing, and separate environments for development, staging, and production. This command-line-first tool supports over 1 million pipeline runs every month and relies on the Singer specification to offer access to more than 600 connectors via the Meltano Hub. Users have reported transferring over 1TB of data daily through the platform.

Capabilities

Meltano acts as a control center for the modern data stack, integrating with tools like dbt for transformations, Apache Airflow for orchestration, and Great Expectations for data quality testing. Its modular framework, built around Singer "taps" (extractors) and "targets" (loaders), allows teams to customize their workflows. Inline data mapping features enable filtering and anonymizing sensitive data, which can support GDPR compliance efforts.
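Singer taps and targets communicate through a stream of JSON messages of type `SCHEMA`, `RECORD`, and `STATE`. The sketch below imitates that flow in plain Python, with an illustrative mapper in between that hashes email addresses. The function names, the `users` stream, and the `bookmarks` layout inside the state value are assumptions for illustration; real pipelines would use existing taps, targets, and Meltano's own mapping configuration.

```python
import hashlib
import json
from typing import Iterable, Iterator, Optional


def tap(rows: list[dict], stream: str = "users") -> Iterator[str]:
    """Emit Singer-style SCHEMA, RECORD, and STATE messages as JSON lines."""
    yield json.dumps({
        "type": "SCHEMA",
        "stream": stream,
        "schema": {
            "type": "object",
            "properties": {"id": {"type": "integer"}, "email": {"type": "string"}},
        },
        "key_properties": ["id"],
    })
    for row in rows:
        yield json.dumps({"type": "RECORD", "stream": stream, "record": row})
    if rows:
        # The bookmark layout is a common convention, assumed here for illustration.
        yield json.dumps({"type": "STATE", "value": {"bookmarks": {stream: {"id": rows[-1]["id"]}}}})


def hash_emails(lines: Iterable[str]) -> Iterator[str]:
    """Illustrative inline mapper: replace each email with its SHA-256 digest."""
    for line in lines:
        message = json.loads(line)
        if message["type"] == "RECORD" and "email" in message["record"]:
            email = message["record"]["email"]
            message["record"]["email"] = hashlib.sha256(email.encode("utf-8")).hexdigest()
        yield json.dumps(message)


def target(lines: Iterable[str]) -> tuple[dict, Optional[dict]]:
    """Collect records per stream and keep the last STATE value seen."""
    loaded: dict = {}
    state = None
    for line in lines:
        message = json.loads(line)
        if message["type"] == "RECORD":
            loaded.setdefault(message["stream"], []).append(message["record"])
        elif message["type"] == "STATE":
            state = message["value"]
    return loaded, state


source_rows = [{"id": 1, "email": "a@example.com"}, {"id": 2, "email": "b@example.com"}]
loaded, state = target(hash_emails(tap(source_rows)))
```

Because every stage reads and writes the same message format, any tap can be paired with any target, and a mapper can be dropped in between without either side changing. Note that hashing is pseudonymization: it reduces exposure of raw values but does not by itself settle compliance questions.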

The Meltano SDK simplifies the process of creating custom connectors for niche or internal APIs, reducing development time from days to just hours. As Matt Menzenski, Senior Software Engineering Manager at PayIt, shared:

"In two focused days with Meltano I basically reproduced what had taken us months to do in-house previously."

Meltano is pip-installable, cloud-agnostic, and deployable across various infrastructures.

Performance

Meltano excels in incremental syncing, with performance largely influenced by plugin configurations. It supports log-based Change Data Capture (CDC) for databases and uses specialized state backends to track sync progress. Quinn Batten, Senior Analytics Engineer, highlighted its impact:

"Meltano's been rock-solid since pre-1.0... It saves us at least $1M/yr and makes my job easy."

While the tool shines in batch processing, high-throughput scenarios may require manual adjustments, and it is not designed for real-time streaming. These factors make it a reliable option for teams focused on batch data workflows.

Suitability

Meltano is particularly effective for teams adopting GitOps practices, as it supports pull-request-based reviews for pipeline changes. It’s also a good fit for organizations with unique tech stacks that demand heavily customized ELT workflows. The platform is free under the MIT License for self-hosted setups, though a managed SaaS version is available for those who prefer avoiding infrastructure management. However, its developer-first approach requires a strong background in coding and data engineering. Martin Morset, a data professional, shared his experience:

"I love Meltano because it's so pleasant to use with its DevOps and Everything-as-Code style. It is easy to set up, flexible, and integrates with pretty much any orchestrator as well as dbt."

Community Support

Meltano has a 4.5/5 rating on G2 and an engaged Slack community with over 5,500 members. Its acquisition by Matatika is intended to expand managed service offerings and will shape the project's future direction. While its community is smaller than Airbyte’s, users consistently appreciate the high level of control it offers and its software development-centric approach to data engineering.

Advantages and Disadvantages

Here's a rundown of the main strengths and weaknesses of each tool, designed to help you quickly identify which one aligns best with your data integration needs. Knowing where each tool excels - and where it falls short - can make all the difference in choosing the right fit.

Airbyte stands out for its massive library of over 600 connectors and an intuitive interface. However, some community-built connectors may not meet enterprise-grade reliability standards.

Apache Airflow is ideal for handling complex workflows with its Python-native Directed Acyclic Graphs (DAGs) and a large global community. That said, it requires significant coding expertise and isn't built for real-time data processing.

Apache NiFi offers strong visual tools for real-time data routing and excellent data lineage tracking. On the downside, its documentation for complex workflows leaves room for improvement.

Talend Open Studio was known for its easy-to-use visual interface, but its open-source version was retired on January 31, 2026, and is no longer supported.

Pentaho Data Integration (PDI) delivers solid big data integration capabilities, including Hadoop support. However, its technology feels outdated, and documentation is sparse.

Meltano is tailored for code-first DataOps teams, offering flexibility for developers. But it demands advanced coding skills, which may be a barrier for some users.

The table below summarizes these insights for quick comparison:

| Tool | Scalability | Real-Time Support | Ease of Use | Key Strength | Key Weakness |
|---|---|---|---|---|---|
| Airbyte | High | Yes (CDC/Batch) | Easy (UI + SDK) | 600+ connectors | Community connector reliability |
| Apache Airflow | High | Limited (Batch-first) | Moderate (Python) | Python-native DAGs and large community | High coding requirement |
| Apache NiFi | High | Excellent | Moderate (Visual) | Real-time data flow and lineage | Weak documentation for complex workflows |
| Talend Open Studio | Moderate | Limited | Easy (Visual) | Intuitive visual interface | Discontinued January 2026 |
| Pentaho PDI | Moderate | Limited | Easy (Visual) | Hadoop integration for big data | Outdated tech and sparse documentation |
| Meltano | High | Batch only | Moderate (CLI) | Code-first DataOps approach | Requires strong coding skills |
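For teams that like to make a shortlist explicit, the ratings in the table can be encoded as data and filtered by requirement. This is a rough sketch: the `Tool` dataclass, the `shortlist` helper, and the rule that "Yes" or "Excellent" counts as real-time support are assumptions, and the ratings themselves are this guide's qualitative judgments rather than benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    scalability: str
    real_time: str
    ease_of_use: str
    maintained: bool = True


# Ratings copied from the comparison table above.
TOOLS = [
    Tool("Airbyte", "High", "Yes (CDC/Batch)", "Easy"),
    Tool("Apache Airflow", "High", "Limited (Batch-first)", "Moderate"),
    Tool("Apache NiFi", "High", "Excellent", "Moderate"),
    Tool("Talend Open Studio", "Moderate", "Limited", "Easy", maintained=False),
    Tool("Pentaho PDI", "Moderate", "Limited", "Easy"),
    Tool("Meltano", "High", "Batch only", "Moderate"),
]


def shortlist(
    needs_real_time: bool = False,
    needs_high_scalability: bool = False,
    easy_only: bool = False,
) -> list[str]:
    """Return the maintained tools that satisfy every requested requirement."""
    names = []
    for tool in TOOLS:
        if not tool.maintained:
            continue  # retired tools are never shortlisted
        if needs_real_time and not tool.real_time.startswith(("Yes", "Excellent")):
            continue
        if needs_high_scalability and tool.scalability != "High":
            continue
        if easy_only and tool.ease_of_use != "Easy":
            continue
        names.append(tool.name)
    return names


real_time_options = shortlist(needs_real_time=True)
easy_and_scalable = shortlist(needs_high_scalability=True, easy_only=True)
```

A filter like this is only a starting point; the detailed sections above cover the trade-offs a single rating cannot capture.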

Conclusion

Picking the right ETL tool depends heavily on your specific needs and strengths. For real-time streaming and ultra-low latency, Apache NiFi stands out with its ability to deliver sub-second performance - something batch-focused tools can't achieve. If your team requires Python-native flexibility to manage complex, interdependent workflows, Apache Airflow is a dependable option, though it demands a higher level of coding expertise. On the other hand, Meltano is ideal for developer-driven environments, offering modular architecture and features tailored for CI/CD workflows and GitOps.

The availability of connectors is another critical factor. For instance, Airbyte's library of over 600 pre-built connectors can save your team significant time on custom development. If a visual, drag-and-drop design interface suits your team better, Pentaho Data Integration offers a user-friendly experience without requiring heavy coding knowledge. Ultimately, selecting a tool that aligns with your team's skills and workflows can make a noticeable difference in productivity.

Operational costs and modern infrastructure are also key considerations. Open-source tools may appear cost-effective but come with hosting, maintenance, and security responsibilities. As cloud-native architectures become more prominent, newer tools like Airbyte and Meltano are built to take advantage of containerization and cloud data warehouses, potentially lowering operational overhead compared to older, legacy solutions.

If you're looking to turn these insights into actionable skills, consider exploring DataExpert.io Academy. They offer specialized boot camps on topics like Apache Airflow, real-time data pipelines, and advanced ETL architecture. For hands-on training with platforms like Databricks, Snowflake, and AWS, their programs provide a practical way to deepen your expertise. Learn more at DataExpert.io.

FAQs

What should I consider when selecting an open-source ETL tool?

When choosing an open-source ETL tool, it's important to focus on a few critical aspects to ensure it aligns with your data integration requirements. Begin by looking into the features and capabilities the tool offers. Does it support core ETL tasks like extraction, transformation, and loading? Consider its performance and scalability as well. Some tools are optimized for real-time data streaming, while others are better for batch processing or handling SQL-based transformations.

You’ll also want to evaluate the tool's ease of use and the strength of its community support. Open-source tools with active communities often provide a wealth of resources, from plugins to troubleshooting advice, which can be incredibly helpful. Tools with user-friendly interfaces or pre-built connectors can make it much easier to set up and maintain your data pipelines.

Lastly, think about compatibility with your existing infrastructure, whether it's cloud-based, on-premises, or a hybrid setup. Make sure the tool can handle not just your current data volumes but also scale with your future needs. By carefully considering these factors, you'll be better equipped to select an ETL tool that fits your organization's specific goals and requirements.

What does the discontinuation of Talend Open Studio mean for its users?

The end of Talend Open Studio means users will now need to look for other open-source ETL tools to manage their data integration tasks. For those who depended on Talend Open Studio to handle complex, large-scale data workflows, this change could feel like a big adjustment.

To make the switch smoother, it's important to assess alternatives based on your unique needs. Consider factors like how well the tool can scale, how user-friendly it is, and whether it works seamlessly with your current systems.

What makes Meltano a great choice for developer-focused environments?

Meltano is an open-source ETL platform built to offer developers the flexibility and control they need. Its command-line interface (CLI)-focused design makes configuring and managing data pipelines straightforward, allowing teams to build and refine workflows efficiently. Plus, developers can tweak and expand the platform as needed, with no dependency on a specific vendor.

The platform works effortlessly with tools like Singer connectors, dbt, and orchestration systems, giving teams the ability to craft collaborative and customized data workflows. Backed by a vibrant community, Meltano is a solid choice for projects where developers want full control and adaptability.