How to Build Scalable Data Quality Frameworks

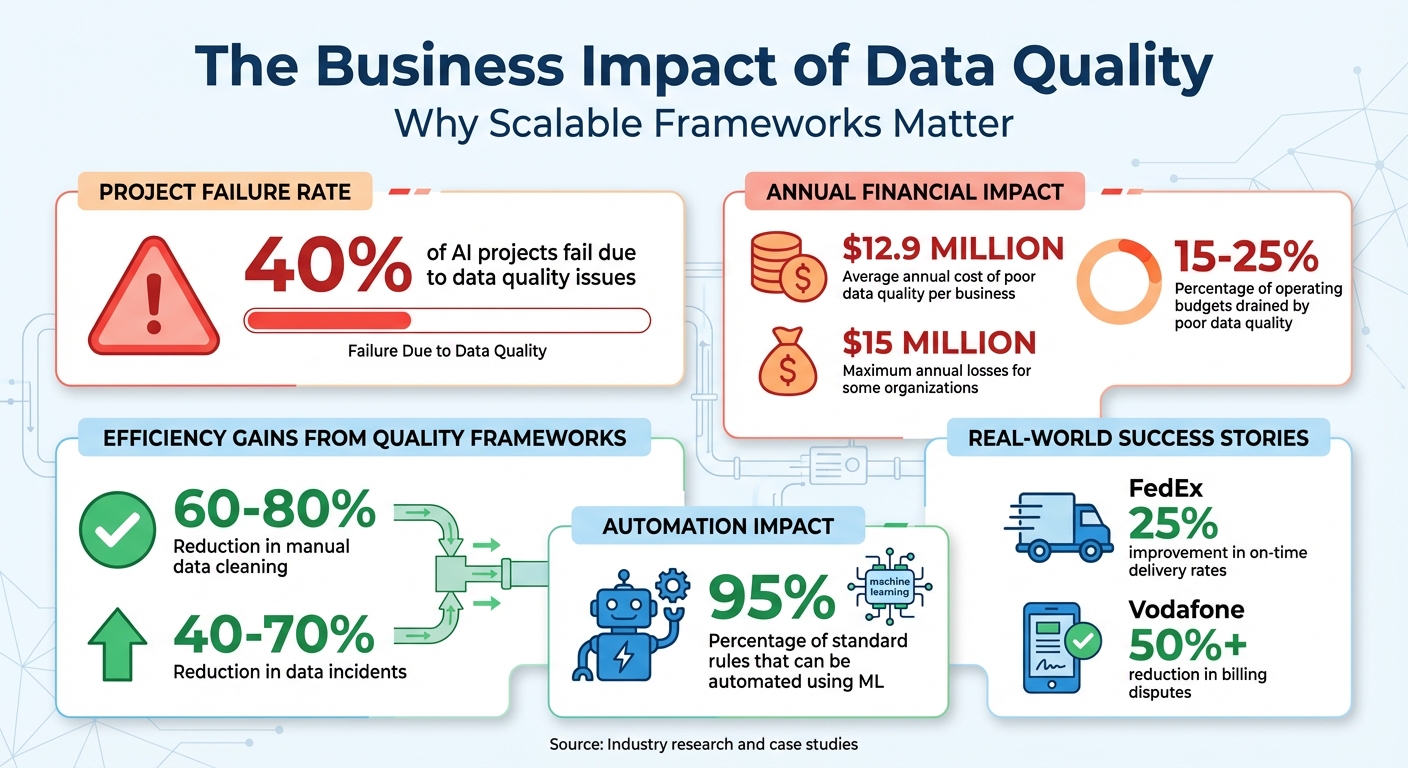

Data quality issues cost companies millions annually and derail nearly 40% of AI projects. A scalable data quality framework ensures reliable, high-quality data for decision-making, compliance, and operational efficiency. Here's how to approach it:

- Understand Business Needs: Identify critical data and prioritize dimensions like accuracy, completeness, and timeliness based on their impact.

- Automate Processes: Use top data engineering boot camp tools for real-time validation, anomaly detection, and automated checks to replace manual efforts.

- Design Flexible Architectures: Opt for modular, metadata-driven designs that simplify updates and scale across pipelines.

- Tie Metrics to Outcomes: Translate technical quality metrics into business impacts to secure stakeholder buy-in.

- Pilot and Expand: Start with high-priority datasets, refine processes, and scale gradually across the organization.

Data Quality Impact: Key Statistics and Business Costs

Data Quality at Scale: Inside OpenMetadata's Framework with AI & Automation.

sbb-itb-61a6e59

Assess Business Needs and Define Data Quality Goals

Start by pinpointing the data that is most critical to your business—a skill emphasized in data engineering and AI training - things like revenue figures, conversion events, customer identifiers, and product catalogs. For example, a finance KPI table should emphasize reconciliation and timeliness, while an event log might tolerate minor duplicates as long as the schema remains stable.

Identify Key Data Quality Dimensions

Concentrate on six main dimensions: accuracy, completeness, timeliness, consistency, uniqueness, and validity. The priority of these dimensions depends on the context. For executive KPI dashboards, freshness and accuracy are essential. For revenue-related data, focus on validity and uniqueness. Meanwhile, for machine learning features, consistency and completeness are key.

A one-size-fits-all approach doesn’t work - Marketing, Finance, and Operations often have distinct needs. These differences call for localized control within a broader enterprise framework.

"Data is high quality when it is fit for its intended use and it reliably stays that way as pipelines evolve." - Coalesce

To prioritize effectively, consider the risks each dimension mitigates - whether they are related to decision-making, operations, or AI readiness. Use a tiered model to classify issues as Critical, Major, or Minor. This structure helps connect technical metrics to meaningful business outcomes.

Map Data Quality to Business Outcomes

The next step is to tie these quality dimensions directly to business results. Translate technical metrics into business language. For instance, instead of saying "10% null rate in email fields", explain the impact: "$X lost in marketing reach." This approach helps secure executive support and justifies investments.

Focus on 5–10 Critical Data Elements (CDEs) that are vital for high-impact processes like supply chain forecasting or financial reporting. If your goal is to reduce billing disputes, prioritize accuracy and consistency in customer address data. If improving on-time delivery rates is the aim, focus on timeliness and validity in shipment tracking records.

A real-world example: FedEx used real-time monitoring and feedback loops to enhance data quality across thousands of daily deliveries. By addressing address errors and data delays before they caused scheduling issues, FedEx boosted on-time delivery rates by 25%. Similarly, Vodafone tackled billing errors caused by inconsistent data entry. By deploying automated validation tools, they flagged incorrect billing and outdated customer data, cutting billing disputes by over 50%. These examples highlight how aligning data quality dimensions with specific goals can lead to measurable improvements.

Finally, assign Data Owners and Data Stewards to oversee relevant domains. Their role is to ensure that quality enhancements deliver the desired business results. Setting clear goals at this stage lays the foundation for designing an architecture that can scale these standards across your organization.

Design a Scalable Data Quality Architecture

Once your quality goals are tied to business outcomes, the next step is creating an architecture that ensures data quality at every stage. This is crucial, as poor-quality data can lead to revenue losses of up to 25%. A robust architecture embeds quality controls throughout the data lifecycle, minimizing these risks.

The architecture should include three key layers: detection, measurement, and reporting. The detection layer identifies issues, the measurement layer quantifies quality levels, and the reporting layer communicates the status through dashboards. These layers work seamlessly across the data lifecycle - from ingestion (validating schema conformity and record volumes) to transformation (enforcing business rules and deduplication) and finally to observability (tracking error rates and SLA breaches).

Use Modular and Decoupled Designs

Hardcoding quality checks directly into pipeline code can make updates cumbersome and error-prone. Instead, store rules in configuration files like YAML or JSON. This allows updates without requiring pipeline redeployment. A metadata-driven approach centralizes rule definitions, enabling them to be applied across multiple pipelines. This also empowers non-technical stakeholders to participate by using human-readable configuration files.

Decoupling these checks means they can run independently of data loading, preventing slowdowns in the pipeline. This method works particularly well in Medallion architectures on platforms like Databricks or Snowflake. For example, Bronze layers can allow more lenient validation (e.g., 80% completeness), while Gold layers enforce stricter standards (e.g., 99%+ completeness). A tiered configuration system - global rules, layer-specific defaults, and table-specific settings - ensures both consistency and flexibility.

| Feature | Hardcoded Checks | Decoupled Platform |

|---|---|---|

| Maintenance | Requires code changes and redeployment | Updated through configuration files or UIs |

| Stakeholders | Limited to data engineers | Accessible to data stewards and analysts |

| Scalability | Hard to manage across many tables | Scales easily with metadata-driven approaches |

| Performance | Can slow pipeline execution | Supports asynchronous validation |

By adopting decoupled designs, you can also introduce automated quality checks and real-time monitoring for a smoother workflow.

Add Automation and Real-Time Monitoring

To complement decoupled designs, integrate automation and real-time monitoring into your data quality processes. Manual checks are not scalable. As DataDef.io puts it, "The teams I've seen succeed treat data quality like unit tests in software: you don't ship code without tests, and you don't ship data without checks". Automation should begin at ingestion - use schema registries to block malformed data from entering the pipeline.

Modern tools can also leverage machine learning to detect anomalies in real time. These systems analyze historical patterns for metrics like data freshness, row counts, and distribution drift, catching unexpected issues that static rules might miss.

Real-time monitoring works best with tiered alerting to avoid overwhelming your team. Classify issues by severity - P1 (critical), P2 (major), or P3 (minor) - based on their business impact. Alerts can be integrated with tools like Slack, Jira, or PagerDuty for immediate action. Including remediation playbooks directly in alerts helps reduce mean time to resolution (MTTR). With automation in place, manual interventions decrease, and incidents become far less frequent.

Finally, store validation rules in version-controlled YAML files and incorporate data quality tests into your CI/CD pipeline. This ensures pull requests don’t introduce changes that break established quality standards, keeping quality gates intact.

These strategies establish a scalable foundation for data quality, setting the stage for the next steps in tool selection and rule automation.

Select and Integrate Scalable Tools

Picking the right tools for your data quality framework is critical. Poor data quality costs businesses an average of $12.9 million annually, often stemming from tools that can't scale or integrate well. Instead of focusing on the number of features, prioritize tools that are easy to use and fit seamlessly into your workflows. Here's how to identify the right tools and integrate them effectively.

Key Features to Look for in Tools

When building a scalable data quality framework, the tools you choose should support continuous quality checks. Focus on four essential areas: data profiling (understanding your data), monitoring and observability (keeping track of data health in real time), validation and testing (ensuring compliance with business rules), and data catalogs (providing lineage and context). This multi-layered "defense-in-depth" strategy offers more resilience than relying on a single platform.

Look for tools that support metadata-driven configuration. This means you can define validation rules in version-controlled, human-readable YAML files, allowing analysts to make updates without waiting for engineering teams. For instance, Snowflake's Data Metric Functions automatically check for issues like nulls and duplicates while also storing results for trend analysis. Similarly, Databricks provides metadata-driven validation frameworks that process large datasets as distributed Spark jobs, ensuring your pipelines remain efficient.

Scalability is another key factor. Integrated solutions can cut manual data cleaning by 60%-80% and reduce data incidents by 40%-70%. Choose platforms that separate compute from storage and work natively with your infrastructure - whether it's Spark for Databricks, Dataplex for BigQuery, or SQL-based checks for Snowflake.

Advanced capabilities like machine learning-based anomaly detection are becoming increasingly important. While static rules handle known issues, machine learning can help uncover unexpected problems. Integration with enterprise data catalogs such as Unity Catalog, Alation, or Collibra is also critical for tracking data lineage and performing root cause analysis when issues arise.

"The best tool is not the one with the most features; it is the one your team will actually use. Prioritize ease of use and deep integration into existing workflows to ensure adoption."

– Peter Korpak, Chief Analyst & Founder, DataEngineeringCompanies.com

Tool Integration Best Practices

To ensure your data quality tools work effectively across your data lifecycle, integration is key. Start with native connectivity. Choose tools that offer built-in connectors for platforms like Snowflake, Databricks, AWS, and your BI tools. Avoid relying on outdated APIs or third-party connectors, as these can lead to maintenance challenges and performance issues. For teams using Databricks, custom metadata-driven frameworks with PySpark often outperform external tools by leveraging Spark's native optimizations. Snowflake users can take advantage of features like "Not Null" constraints and zero-copy cloning, which allows testing new quality rules in a production-like environment without affecting live data or adding storage costs.

Store your quality rules in the same repository as your ETL/ELT scripts using version control. This "rules-as-code" approach integrates easily with CI/CD pipelines, enabling automated tests during pull requests and blocking changes that violate quality standards.

Set up quality checks to run immediately after pipeline completion instead of on a fixed schedule. This near-real-time validation helps prevent bad data from spreading downstream. Use Medallion-aware logic to enforce stricter quality standards as data moves through the layers - allowing for 80% completeness in raw (Bronze) layers but requiring 95% or higher in curated (Gold) layers.

Finally, automate your remediation workflows. When a quality check fails, the system should send alerts, assign ownership, provide a remediation playbook, and track resolution status. This reduces mean time to resolution (MTTR) and ensures accountability across teams.

For more hands-on experience, check out DataExpert.io Academy. Their boot camps cover platforms like Databricks, Snowflake, and AWS, providing real-world projects on building scalable data quality pipelines from scratch.

Build and Automate Data Quality Rules

With scalable tools in place, the next step is to define and automate data quality rules that align with your business goals. The key here is to create rules that drive meaningful improvements in the quality of your data.

Develop Weighted Scoring Models

Not all data errors are created equal. A missing customer ID, for instance, is far more critical than a missing middle name. Weighted scoring models address this by assigning varying levels of importance to different data elements based on their business impact.

To manage this effectively, categorize rules by their severity:

- Critical: Issues that block processing, like invalid financial transactions.

- Major: Errors that affect reliability, such as incomplete address fields.

- Minor: Issues that don’t disrupt operations but should be monitored for trends.

This system ensures your team focuses on the most pressing issues without being overwhelmed by alerts. Each rule should align with one of the six core data quality dimensions: Accuracy, Completeness, Consistency, Timeliness, Validity, and Uniqueness. For example:

- A completeness rule might require every lead record to include either an email or phone number.

- A validity rule could ensure U.S. zip codes are either 5 or 9 numeric digits.

General Motors has adopted a forward-thinking approach by treating datasets as formal data contracts that connect technical checks to business outcomes. Sherri Adame, Enterprise Data Governance Leader at GM, explains:

"By treating every dataset like an agreement between producers and consumers, GM is embedding trust and accountability into the fabric of its operations".

For flexibility and scalability, define rules using declarative frameworks like YAML or SQL-based tools (e.g., Soda Core or Dataplex). This keeps rules separate from application logic, making updates easier as your data environment evolves. Modern platforms even leverage machine learning to automate about 95% of standard rules, such as schema validation, null checks, and range thresholds. Manual effort is then reserved for more complex logic.

Once your weighted rules are ready, automate their enforcement to ensure consistent data quality.

Automate Rule Enforcement and Trend Analysis

Defining rules is only the first step - automation ensures they are applied consistently without manual intervention. By integrating these rules into your CI/CD pipelines, you can enforce data quality during every stage of development. For example:

- Run validation tests during pull requests to block changes that fail to meet standards before they go live.

- For streaming data, route invalid events to a "dead-letter" or quarantine topic for review, preventing bad data from corrupting downstream systems.

When deploying AI-generated rules, start in "shadow mode" to log violations without directly impacting operations. Once thresholds are fine-tuned and false positives minimized, transition to active enforcement.

Track the effectiveness of your rules over time by storing check results in time-series databases or tools like BigQuery. Use dashboards with clear green, yellow, and red indicators to make data health visible to non-technical stakeholders. Monitoring trends, such as a drop in completeness from 95% to 80%, can help you identify and address systemic issues early.

Another proactive measure is volumetric monitoring. By comparing current batch sizes to 30-day historical averages, you can catch silent pipeline failures where jobs appear successful but ingest incomplete data. This approach reduces risks and ensures reliable decisions, models, and customer interactions.

For hands-on guidance, DataExpert.io Academy offers boot camps on platforms like Databricks, Snowflake, and AWS, complete with real-world projects to help you build automated data quality pipelines.

Scale Data Quality Frameworks Across the Organization

Once automated and integrated quality checks are in place, the next step is to scale these efforts across the organization. This process isn’t a one-time event - it’s a phased journey that requires careful planning and execution to ensure success.

Pilot, Expand, and Industrialize

Begin by focusing on 10–20 high-priority assets - like executive dashboards, key financial tables, or customer-facing analytics - that offer clear and immediate value. This targeted approach demonstrates quick wins, helping to build trust among stakeholders and prove the framework's return on investment.

During the pilot phase, assign specific roles to each dataset: a technical owner, a domain owner, and a data steward. Use 30 days of historical data to establish thresholds for schema integrity, freshness, volume anomalies, and uniqueness. Classify issues by severity - Critical, Major, or Minor - to prioritize and address problems effectively.

Once the pilot proves successful, expand gradually to other areas. Document both successes and setbacks to refine your processes and improve your playbooks. As Peter Korpak highlights, a proactive approach to data quality reduces business risks and improves interactions across the board.

The final phase, industrialization, involves automating quality rule inheritance through data lineage. This ensures that checks created at upstream points automatically apply to downstream tables, reducing manual effort. By adopting this shift-left approach, you can catch data issues at the ingestion stage, preventing them from reaching production systems.

After automating rule inheritance, the focus shifts to managing the complexities of varied data formats and high-speed data streams.

Handle Data Variety and Velocity

As your framework grows, you’ll need to address challenges arising from diverse data formats and rapid data flows. From structured tables to semi-structured JSON APIs to unstructured logs, data can come in many forms. A connector ecosystem that integrates with SaaS tools, cloud warehouses, and streaming platforms ensures no data source is overlooked. Decoupling quality checks from hardcoded logic also provides flexibility as tools and sources evolve.

For high-speed streaming data - like Kafka topics - introduce real-time schema enforcement to ensure incoming messages meet structural expectations before entering your platform. Use stateful processing to identify duplicates or malformed data in the stream, and apply watermarks with latency thresholds to handle late-arriving or missing data before they disrupt analytics.

To avoid overwhelming teams with unnecessary alerts, implement tiered alerting. For example:

- P1 alerts for critical, revenue-impacting issues.

- P2 alerts for quality degradation.

- P3 alerts for informational updates.

Integrate these alerts with tools like Jira or Slack to assign clear ownership and track resolutions. For computationally heavy validations in streaming environments, use sampling techniques to maintain system performance and avoid bottlenecks.

Another effective strategy is adopting data contracts - machine-readable agreements between data producers and consumers that define schema and semantic rules. These contracts ensure consistency, prevent breaking changes, and set clear expectations between teams.

For those looking to develop these skills further, DataExpert.io Academy offers specialized training. Their courses cover scalable data quality frameworks using tools like Databricks, Snowflake, and AWS, complete with practical capstone projects to apply your learning in real-world scenarios.

Conclusion and Next Steps

Creating a scalable data quality framework isn’t just a technical endeavor - it’s a smart business move. Poor data quality can drain 15%–25% of operating budgets, with some organizations losing as much as $15 million annually because of it. Even more alarming, around 38% of AI projects fail due to subpar data quality.

Key Takeaways

The foundation of scalable data quality lies in aligning it with your business objectives. Focus on KPIs such as customer satisfaction, regulatory compliance, or AI model performance to ensure your framework drives measurable results. Jessica Sandifer, Tech Writer at DataGalaxy, explains it well:

"Cleansing data solves today's problems, but keeping it clean prevents tomorrow's problems."

Automation is another critical piece of the puzzle. Replace manual processes with modular, flexible architectures that can handle evolving data sources. Incorporate continuous monitoring systems that classify issues by severity - critical, major, or minor - so you can address them efficiently.

Don’t forget about accountability. Assign data stewards to oversee key domains, and start with high-impact pilot projects to test and refine your approach. By tying your efforts to business goals, automating workflows, and setting clear roles, you’ll establish a strong, scalable framework for maintaining data quality.

Learning Opportunities

Ready to take the next step? DataExpert.io Academy offers hands-on boot camps that teach you how to implement scalable data quality frameworks using platforms like Databricks, Snowflake, and AWS. Their programs feature real-world projects, guest speakers, and a community of data professionals to help you deepen your skills and apply them effectively.

FAQs

Which data should I quality-check first?

When it comes to data, it's crucial to focus on the areas that directly impact your organization's decisions and operations. Pay close attention to customer data, financial records, and key business metrics - errors here can result in costly mistakes and unnecessary risks.

It's also smart to validate data that feeds into downstream systems or dashboards as early as possible. Catching errors at this stage helps stop them from spreading further. Begin by checking the most critical data sources to ensure reliability where it counts the most.

How do I set pass/fail thresholds for data quality?

To establish pass/fail thresholds for data quality, start by defining clear validation rules. These might include acceptable limits for null values, accuracy percentages, or data completeness. Once these rules are set, integrate them into your tools or scripts to automate the validation process. Use dashboards or alerts to monitor the results in real time. If the data surpasses the set thresholds, it will trigger a fail status, helping you maintain consistent quality standards throughout your system.

How can I enforce data quality without slowing pipelines?

Incorporating automated data quality checks into your workflows can streamline processes and ensure consistent accuracy. Tools like Great Expectations or dbt-expectations are excellent for validating data efficiently without causing noticeable delays.

Automation frameworks such as Databricks DQX or Dataplex take it a step further by scheduling rule-based checks, monitoring data quality, and sending alerts when issues arise. These frameworks help maintain both accuracy and pipeline performance. Additionally, using AI-driven validation rules can minimize the need for manual upkeep as your systems grow, making scalability much easier to manage.