Databricks Projects for Data Engineer Portfolios

To stand out in today’s data engineering job market, you need more than just a list of skills. Employers want to see end-to-end, production-ready projects that demonstrate your ability to solve real-world problems. Tools like Databricks, Snowflake, and Apache Airflow are essential for building a portfolio that showcases your technical range and business impact.

Key Takeaways:

- Databricks: Ideal for large-scale data processing, machine learning, and real-time workflows using Spark and Delta Lake.

- Snowflake: Best for SQL-based ELT pipelines, business intelligence dashboards, and enterprise-level data warehousing.

- Apache Airflow: Highlights orchestration skills, Python-based automation, and multi-cloud workflow management.

Each tool has unique strengths:

- Databricks excels in AI/ML workflows and streaming.

- Snowflake simplifies SQL analytics and BI reporting.

- Airflow provides flexibility for orchestrating complex pipelines.

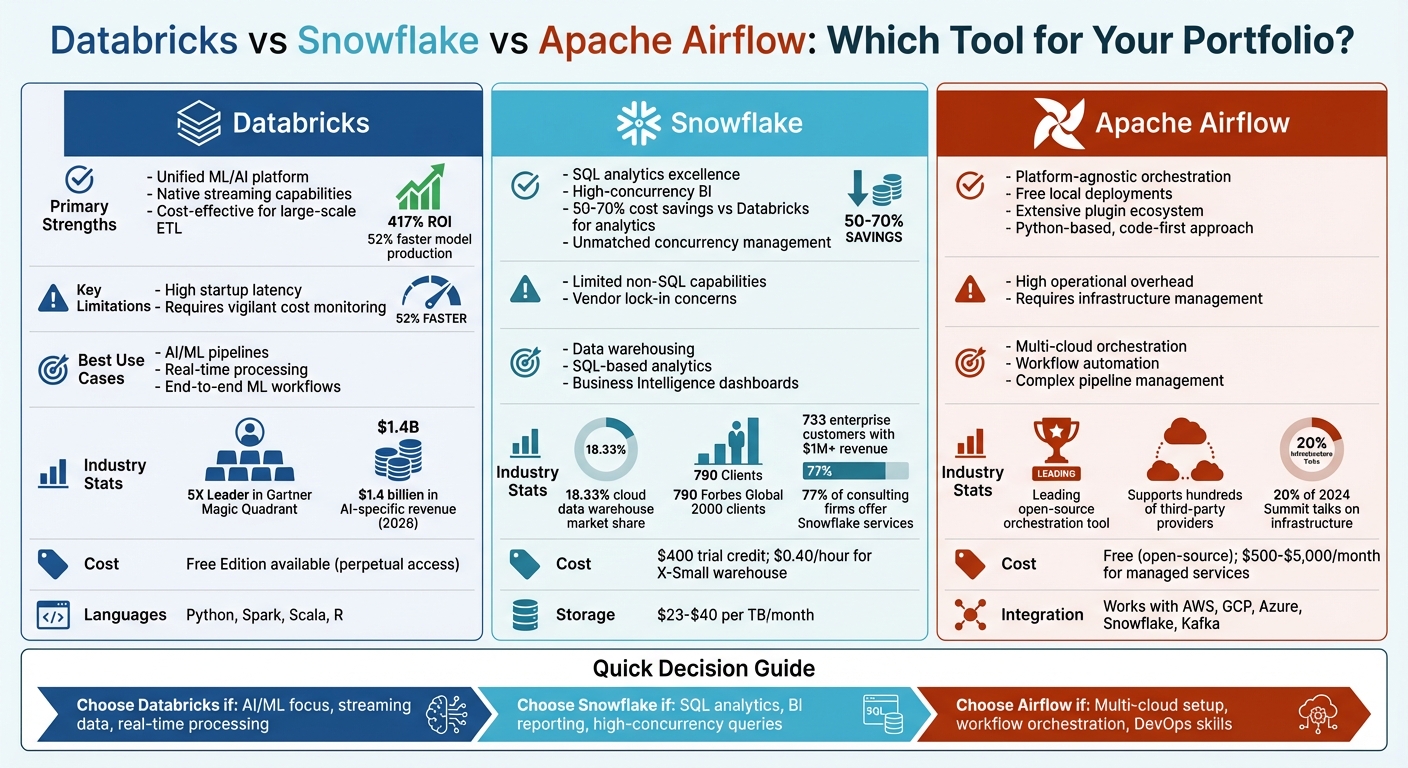

Quick Comparison

| Tool | Strengths | Limitations | Best Use Case |
|---|---|---|---|
| Databricks | Unified data processing and ML capabilities | Startup latency, cost monitoring needed | AI/ML pipelines, real-time processing |
| Snowflake | SQL analytics, high-concurrency BI | Limited non-SQL workflows, vendor lock-in | Data warehousing, SQL-based analytics |
| Airflow | Platform-agnostic orchestration | Requires infrastructure management | Workflow automation, multi-cloud setups |

By combining these tools in your portfolio, you can demonstrate expertise across data ingestion, transformation, analytics, and orchestration - skills highly valued in the industry.

Databricks vs Snowflake vs Apache Airflow: Data Engineering Tools Comparison

1. Databricks

Portfolio Project Complexity

Databricks projects give you the chance to build production-ready, end-to-end data systems. By implementing the Medallion Architecture, with its Bronze (raw), Silver (cleaned), and Gold (aggregated) layers, you can demonstrate strong data quality management and governance practices. Designing Change Data Capture (CDC) workflows and building Slowly Changing Dimension (SCD) Type 2 loads with tools like Delta Live Tables and Auto Loader shows that you can handle high-frequency data scenarios. Adding production-level features such as automated retries and scheduled notifications further signals your readiness for real-world challenges.
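To make the SCD Type 2 idea concrete, here is a minimal sketch in plain Python rather than Spark. The `CustomerVersion` record, the `apply_scd2` helper, and the sample values are all hypothetical names invented for illustration; on Databricks the same logic would more typically be expressed as a Delta Lake `MERGE` or a declarative pipeline, so treat this only as a model of the bookkeeping involved.

```python
from dataclasses import dataclass, replace
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class CustomerVersion:
    """One row of a hypothetical customer dimension with validity dates."""
    customer_id: int
    city: str
    valid_from: date
    valid_to: Optional[date] = None  # None means "still current"

    @property
    def is_current(self) -> bool:
        return self.valid_to is None


def apply_scd2(
    history: list[CustomerVersion],
    changes: dict[int, str],
    as_of: date,
) -> list[CustomerVersion]:
    """Apply {customer_id: city} changes without overwriting old versions."""
    result = []
    has_current = set()
    for row in history:
        if row.is_current:
            has_current.add(row.customer_id)
        new_city = changes.get(row.customer_id)
        if row.is_current and new_city is not None and new_city != row.city:
            # Close the old version, then open a new current one.
            result.append(replace(row, valid_to=as_of))
            result.append(CustomerVersion(row.customer_id, new_city, as_of))
        else:
            # Historical rows and unchanged current rows are kept as-is.
            result.append(row)
    # Keys without a current version are brand-new dimension members.
    for customer_id, city in changes.items():
        if customer_id not in has_current:
            result.append(CustomerVersion(customer_id, city, as_of))
    return result


# Made-up sample data: customer 1 moves, customer 2 appears for the first time.
history = [CustomerVersion(1, "Austin", date(2024, 1, 1))]
history = apply_scd2(history, {1: "Denver", 2: "Boston"}, date(2024, 6, 1))
for row in history:
    print(row)
```

The point a reviewer will look for is that the old Austin row is closed rather than updated in place, so queries can still reconstruct what the dimension looked like on any past date.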

Skill Demonstration

Working on Databricks projects highlights a wide range of technical skills, from SQL and Python to advanced Spark-based data processing. You can also integrate AI functionality directly into pipelines, using SQL functions like ai_query() for sentiment analysis or ai_forecast() for time-series modeling. This aligns with the growing industry focus on Generative AI and machine learning. Leveraging Unity Catalog for managing schemas and permissions demonstrates your ability to implement enterprise-level data governance and to make strategic architectural decisions. These projects not only refine your technical expertise but also show how you can address practical business challenges.

As Venkatesh Sharma, Enterprise Data Strategy Writer, explains, "Databricks alliances favor proactive partners who can run with deals, not just support them."

This reinforces the importance of showcasing not just the technical aspects of your work but also the reasoning behind your architectural choices and how they solve specific business problems.

Industry Adoption

Databricks’ strong industry reputation adds even more value to your portfolio. Recognized as a "5X Leader" in the Gartner Magic Quadrant for Cloud Database Management Systems, the platform is widely adopted for AI-driven data workflows and advanced analytics. This has created a growing demand for engineers skilled in navigating its ecosystem.

Pawan Shukla, Associate Consultant at Tata Consultancy Services, notes, "So many new things have been introduced in the last two years. I need to stay up-to-date on those new technologies to ensure my competence."

This underscores the importance of staying current with Databricks’ expanding capabilities to remain competitive in the job market.

Cost and Accessibility

The Databricks Free Edition eliminates cost barriers, making it easier to build portfolio projects. With perpetual access to the platform - no credit card or phone number required - you can experiment with tools like Delta Lake, create AI/BI dashboards, and run Spark jobs at no cost. Preloaded sample datasets let you start building and experimenting right away. Additionally, training through Databricks Academy offers a structured data engineering bootcamp to validate your skills.

2. Snowflake

Portfolio Project Complexity

Snowflake projects simplify operational tasks by automating key processes like query optimization, caching, and concurrency management. This lets you focus on crafting strong SQL-based ELT pipelines and building BI dashboards. A well-rounded Snowflake project should cover the entire ELT lifecycle - from extracting and loading raw data to automating its transformation. However, while Snowflake's proprietary storage format makes management easier, it may introduce vendor lock-in compared to open formats like Delta Lake or Parquet. This managed approach contrasts with platforms that require more manual tuning, so your project can concentrate on specialized SQL and modeling skills, which you can further validate with data engineering certifications.

Skill Demonstration

Snowflake projects allow you to demonstrate expertise in creating automated data workflows. For instance, you can highlight skills in building ETL/ELT workflows using Snowpark, which supports languages like Python, Java, and Scala. Additionally, leveraging features like Dynamic Tables for near-real-time analytics and Snowpipe for automated data loading can set your work apart. Migration projects, such as moving legacy data warehouses to Snowflake, can further showcase your ability to handle tasks like discovery, code conversion, data transfer, and validation. Sharing your work via GitHub repositories also emphasizes your commitment to professional version control practices.

Industry Adoption

Snowflake's strong market presence can significantly enhance your portfolio. As of January 31, 2026, Snowflake holds 18.33% of the cloud data warehousing market and serves 790 Forbes Global 2000 clients. Among its customers, 733 generate over $1 million each in trailing 12-month product revenue - a 27% year-over-year increase. Furthermore, 77% of data engineering consulting firms offer Snowflake-specific services, while 74% of specialized consulting firms work with both Snowflake and Databricks.

As Peter Korpak, Chief Analyst & Founder at DataEngineeringCompanies.com, notes: "Snowflake is generally better for Business Intelligence. It offers unmatched concurrency management and out-of-the-box performance for SQL-based BI tools like Tableau and Power BI, without requiring extensive manual tuning."

Cost and Accessibility

Snowflake provides a $400 credit trial for new users, making it affordable to explore the platform. Compute is billed in credits, typically priced at $2 to $4 per credit depending on the edition and cloud region. For example, an X-Small warehouse consumes one credit per hour, so on AWS US East it costs roughly $2 per hour on the Standard edition, billed per second while the warehouse is running. Storage costs range between $23 and $40 per terabyte per month for active data. Additionally, zero-copy cloning allows you to quickly set up development or testing environments without adding extra storage costs.

3. Apache Airflow

Portfolio Project Complexity

Adding Apache Airflow to your portfolio enhances your skill set alongside tools like Databricks and Snowflake. Airflow projects rely on a code-first approach, requiring you to define workflows as Directed Acyclic Graphs (DAGs) using Python. This involves coding every step of your data pipeline, from extraction to loading, giving you hands-on experience in orchestration. Unlike managed platforms, Airflow requires you to handle schedulers, worker nodes, and metadata databases, introducing operational challenges. These responsibilities highlight your abilities in infrastructure management and DevOps. In fact, about 20% of talks at the 2024 Airflow Summit focused on infrastructure and operational hurdles, emphasizing how these skills set seasoned engineers apart.
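The DAG idea itself can be sketched without installing Airflow. The example below is not Airflow's API: it uses Python's standard-library `graphlib` to order three hypothetical tasks (`extract`, `transform`, `load`) by their dependencies, which is the core contract an Airflow scheduler enforces. The task names, the `run` helper, and the sample values are assumptions made for illustration.

```python
from graphlib import TopologicalSorter


def extract():
    # Made-up raw values; a real task would call an API or read a file.
    return [" 42 ", "7", "oops"]


def transform(raw_values):
    # Keep only values that parse as integers.
    cleaned = []
    for value in raw_values:
        try:
            cleaned.append(int(value))
        except ValueError:
            continue
    return cleaned


def load(rows):
    # Stand-in for a warehouse write; returns a status message instead.
    return f"loaded {len(rows)} rows"


# Each task maps to (callable, list of upstream task names).
TASKS = {
    "extract": (extract, []),
    "transform": (transform, ["extract"]),
    "load": (load, ["transform"]),
}


def run(tasks):
    """Run every task after its upstream tasks, passing results downstream."""
    graph = {name: set(upstream) for name, (_, upstream) in tasks.items()}
    # static_order() raises graphlib.CycleError if the graph is not acyclic.
    order = list(TopologicalSorter(graph).static_order())
    results = {}
    for name in order:
        func, upstream = tasks[name]
        results[name] = func(*(results[u] for u in upstream))
    return order, results


order, results = run(TASKS)
print(order)
print(results["load"])
```

In a real Airflow project the scheduler, retries, and worker placement replace the simple loop in `run`, but the dependency declaration is what you are expected to reason about in an interview.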

Skill Demonstration

Airflow's complexity offers plenty of chances to showcase your technical expertise. By working on Airflow projects, you can demonstrate Python orchestration skills and your ability to integrate with systems like Hadoop, Snowflake, and Redshift. Advanced techniques like managing task dependencies, implementing branching logic with @task.branch, and using dynamic task mapping with the expand() method can further underscore your expertise [17, 18, 21]. For example, in August 2023, George Yates, a Field Engineer at Astronomer, built a stock data ETL project that extracted data for companies like AAPL and FOX. Running locally on a MacBook via the Astro CLI, the pipeline achieved an average runtime of 8.66 seconds at no cost. Additionally, Airflow projects can highlight advanced capabilities, such as machine learning model training, deployment, and monitoring data quality and security.

Industry Adoption

Apache Airflow has become a leading open-source tool, especially in hybrid and multi-cloud setups, thanks to its platform-agnostic design. Its extensive community and ecosystem of plugins make it indispensable for managing complex, multi-vendor data environments. With support for hundreds of third-party providers, Airflow allows orchestration across platforms like AWS, GCP, Azure, and tools such as Snowflake and Kafka. Databricks has supported Airflow integration since 2017, offering operators like DatabricksSubmitRunOperator and DatabricksSqlOperator to trigger Databricks jobs and notebooks directly from Airflow DAGs.

Cost and Accessibility

Airflow’s open-source nature makes it accessible for portfolio projects, as it can run locally using Docker and the Astro CLI. While the software itself is free, production deployments often require additional investment in infrastructure and maintenance. Managed Airflow services typically range from $500 to $5,000 per month for small to medium-sized teams. For personal projects, the Astro CLI provides an easy way to set up a local environment, avoiding any cloud-related costs.

"Running on my MacBook, so it cost nothing to run, but if you wanted to run this in the cloud, you could do it on one node only running for 9 seconds for a pretty negligible cost."

– George Yates, Field Engineer, Astronomer

Advantages and Disadvantages

This section breaks down the key strengths and challenges of each tool, summarizing their capabilities and helping you decide which aligns best with your goals. Each platform has its own perks and drawbacks, making them suitable for different scenarios.

Databricks stands out as a comprehensive platform for data engineering, machine learning (ML), and streaming. Its open lakehouse design reduces dependency on specific vendors, and it supports multiple languages, including Python, SQL, Scala, and R. The platform's industry adoption is evident - it generated $1.4 billion in AI-specific revenue as of early 2026. However, managing costs can be tricky. Leaving clusters running can quickly inflate expenses, and startup latency often delays notebook execution by several minutes.

"Remember to delete resources you're not using and check your accumulated bill regularly."

– George Michael Dagogo, Data Engineer

Snowflake, on the other hand, shines in SQL analytics and business intelligence (BI) reporting. It’s particularly cost-effective, delivering 50–70% savings compared to Databricks for high-concurrency query workloads. Its independent virtual warehouses handle thousands of concurrent users seamlessly, making it a solid choice for showcasing data warehousing expertise. That said, Snowflake's focus on SQL limits its ability to demonstrate complex data engineering workflows. Additionally, its proprietary storage model has raised concerns about vendor lock-in, though recent support for Apache Iceberg has addressed some of these issues.

Apache Airflow is the go-to tool for flexibility. As an open-source orchestration platform, it works across any cloud or platform. Its Python-based, code-first approach and vast plugin ecosystem - supporting hundreds of third-party providers - make it a valuable choice for those looking to highlight orchestration skills. You can deploy it locally for free using tools like Docker and the Astro CLI, while managed services for production typically cost anywhere from $500 to $5,000 per month. Airflow's flexibility also comes with a tradeoff: operational complexity. If you self-host rather than use a managed service, you’ll need to handle schedulers, worker nodes, and metadata databases yourself.

| Tool | Primary Strengths | Key Limitations | Best Portfolio Use Case |
|---|---|---|---|
| Databricks | Unified ML/AI platform, native streaming, cost-effective for large-scale ETL | High startup latency; requires vigilant cost monitoring | End-to-end ML pipelines, real-time processing |
| Snowflake | SQL excellence, high-concurrency BI, cost savings for analytics | Limited non-SQL capabilities; vendor lock-in concerns | Data warehousing, SQL analytics |
| Airflow | Platform-agnostic, free local deployments, extensive integrations | High operational overhead; requires infrastructure management | Multi-cloud orchestration, workflow automation |

This table offers a quick snapshot of each tool's strengths and limitations, helping you match them to your project needs. Whether you're building ML pipelines, focusing on SQL analytics, or managing workflows across multiple platforms, there's a tool tailored to your goals.

"The choice hinges on a practical question: is your organization's center of gravity BI or AI?"

– Peter Korpak, Chief Analyst, DataEngineeringCompanies.com

Interestingly, many Fortune 500 companies adopt a hybrid strategy - using Databricks for heavy ETL and ML tasks, then exporting refined data to Snowflake for BI reporting. Your choice should reflect your career aspirations, whether in AI/ML, BI, or orchestration, to make your portfolio stand out.

Conclusion

Choosing the right tool for your data engineering portfolio depends on what you aim to showcase. Each platform offers distinct capabilities that can highlight specific skills and expertise.

Databricks stands out for unifying data ingestion, transformation, and streaming, making it ideal for projects involving complex AI and machine learning workflows. With a reported 417% ROI and a 52% faster model production rate, it’s a great choice for demonstrating advanced engineering skills, real-time data processing, or AI capabilities.

While Databricks shines in AI and ML, Snowflake and Apache Airflow play crucial roles in complementing the modern data stack. Snowflake excels in showcasing SQL proficiency, business intelligence reporting, and high-concurrency analytics. On the other hand, Airflow is perfect for demonstrating orchestration skills and managing intricate, multi-tool workflows.

"Databricks is where you build intelligence; Snowflake is where you operationalize it."

– Michael Speissbach, Manager of Data Architecture & Engineering, Datalere

A well-rounded portfolio should reflect a balanced use of these tools, tailored to enterprise needs. Highlight Databricks for machine learning and AI-focused roles, leverage Snowflake for analytics and BI, and showcase Airflow to demonstrate orchestration and DevOps expertise. By strategically curating projects that integrate these platforms, you showcase how modern data teams use the right tools for the right tasks, aligning your skills with current industry demands.

FAQs

What’s a strong Databricks portfolio project idea?

One impressive way to showcase your Databricks expertise is by creating an end-to-end declarative data pipeline. This type of project highlights your ability to design scalable systems, manage both batch and streaming data, and optimize workflows effectively.

For instance, you could develop a pipeline using Databricks' Lakeflow Spark Declarative Pipelines to enhance analytics for a ride-hailing company. This could include tasks like processing trip data, calculating driver performance metrics, and delivering real-time insights into customer demand.
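Before writing any Spark code, it can help to prototype the layer logic in plain Python. The sketch below walks made-up trip records through Bronze, Silver, and Gold steps; the field names (`trip_id`, `driver_id`, `fare`, `rating`) and the helper functions are hypothetical, and a real project would implement the same rules as pipeline tables rather than in-memory lists.

```python
from collections import defaultdict

# Bronze: raw trip events exactly as they arrived (made-up sample records).
bronze_trips = [
    {"trip_id": "t1", "driver_id": "d1", "fare": "12.50", "rating": "5"},
    {"trip_id": "t2", "driver_id": "d1", "fare": "7.50", "rating": "4"},
    {"trip_id": "t2", "driver_id": "d1", "fare": "7.50", "rating": "4"},  # duplicate
    {"trip_id": "t3", "driver_id": "d2", "fare": "bad", "rating": "3"},   # malformed
    {"trip_id": "t4", "driver_id": "d2", "fare": "20.00", "rating": "5"},
]


def to_silver(raw_trips):
    """Deduplicate on trip_id, cast types, and drop rows that fail to parse."""
    seen, clean = set(), []
    for row in raw_trips:
        if row["trip_id"] in seen:
            continue
        try:
            typed = {**row, "fare": float(row["fare"]), "rating": int(row["rating"])}
        except ValueError:
            continue  # a real pipeline would route this row to a quarantine table
        seen.add(row["trip_id"])
        clean.append(typed)
    return clean


def to_gold(silver_trips):
    """Aggregate cleaned trips into per-driver performance metrics."""
    grouped = defaultdict(list)
    for row in silver_trips:
        grouped[row["driver_id"]].append(row)
    return {
        driver: {
            "trips": len(rows),
            "revenue": sum(r["fare"] for r in rows),
            "avg_rating": sum(r["rating"] for r in rows) / len(rows),
        }
        for driver, rows in grouped.items()
    }


silver_trips = to_silver(bronze_trips)
gold_driver_metrics = to_gold(silver_trips)
print(gold_driver_metrics)
```

Keeping the Silver rules (deduplication, type casting, rejection of bad rows) separate from the Gold aggregation is the design choice worth explaining in your project README, since it mirrors how data quality expectations are attached to individual layers.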

To further demonstrate your range, consider adding other projects to your portfolio, such as building real-time streaming pipelines or conducting financial market analysis. These varied examples not only underline your technical skills but also show your adaptability with different data engineering tools and techniques.

How do I combine Databricks, Snowflake, and Airflow in one project?

To integrate Databricks, Snowflake, and Airflow effectively, you can use Airflow to manage and orchestrate workflows. Here's how it works:

- Airflow for Orchestration: Use Airflow DAGs (Directed Acyclic Graphs) to schedule and trigger Databricks jobs for data processing tasks.

- Databricks for Processing: Perform complex data transformations or machine learning tasks in Databricks.

- Snowflake for Storage and Queries: Once processing is complete, load the results into Snowflake or run queries directly on the processed data.

Additionally, you can trigger Databricks notebooks and jobs directly from Airflow with the Databricks provider's operators, such as DatabricksSubmitRunOperator. This setup combines Databricks' computational power, Snowflake's efficient storage and querying capabilities, and Airflow's scheduling and monitoring tools for a streamlined data pipeline.

How can I keep Databricks and Snowflake costs low for a portfolio?

To keep costs down with Databricks, make use of features like autoscaling, auto-termination, and carefully optimized cluster configurations that match your workload needs. For Snowflake, take advantage of auto-suspend and auto-resume settings, choose the right warehouse sizes, and keep a close eye on credit usage. Regularly reviewing workloads and scaling resources appropriately can help you avoid paying for idle capacity. These steps can help you manage expenses effectively without compromising performance.
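To see why the auto-suspend setting matters, here is a rough back-of-the-envelope model in Python. It assumes a deliberately simplified billing rule: the warehouse resumes when a query arrives, keeps running until `auto_suspend_s` seconds of idleness have passed, and each resume is billed per second with a 60-second minimum. The function name, the query windows, and the one-credit-per-hour rate are illustrative assumptions; check your own account's usage views for real figures.

```python
def billed_seconds(queries, auto_suspend_s, minimum_s=60):
    """Estimate billed seconds for (start, end) query windows given in seconds.

    Simplified model: consecutive queries share one running session when the
    gap between them is no longer than the auto-suspend timeout.
    """
    total = 0
    session_start = session_end = None
    for start, end in sorted(queries):
        if session_end is not None and start <= session_end + auto_suspend_s:
            # The warehouse was still running, so extend the current session.
            session_end = max(session_end, end)
            continue
        if session_end is not None:
            # Close the previous session, including its idle tail.
            total += max(session_end + auto_suspend_s - session_start, minimum_s)
        session_start, session_end = start, end
    if session_end is not None:
        total += max(session_end + auto_suspend_s - session_start, minimum_s)
    return total


# Made-up workload: two short queries close together, one an hour later.
queries = [(0, 30), (100, 130), (3600, 3620)]
CREDITS_PER_HOUR = 1  # assumption: smallest warehouse size

for timeout in (60, 600):
    seconds = billed_seconds(queries, auto_suspend_s=timeout)
    credits = seconds / 3600 * CREDITS_PER_HOUR
    print(f"auto_suspend={timeout}s -> {seconds}s billed, {credits:.3f} credits")
```

For sporadic portfolio workloads like this one, most of the billed time under the longer timeout is idle tail rather than query execution, which is why a short auto-suspend is usually the first setting to change.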