Blog

Data Query Performance Analyzer

Boost your database speed with our free Data Query Performance Analyzer! Input your SQL query, get instant performance insights, and optimize effortlessly.

Data File Format Converter

Easily convert data files between CSV, JSON, XML, and Parquet with our free tool. Processing is fast, secure, and runs client-side to protect your privacy!

Data Engineering Learning Path Planner

Build your personalized data engineering learning path with our free tool! Input your skills and goals to get a tailored roadmap with resources.

Data Pipeline Cost Calculator

Estimate data pipeline costs on AWS, Azure, or GCP with our free calculator. Get detailed breakdowns and save on cloud expenses today!

Data Engineering Skills Assessment

Think you're a data engineering pro? Take our free skills assessment to evaluate your expertise and get personalized feedback!

Complete Data Engineering Roadmap: SQL, Spark, Azure 2026

Discover the ultimate 2026 data engineering roadmap, covering SQL, Spark, Azure fundamentals, certifications, and hands-on projects to kickstart or advance your career.

Horizontal vs. Vertical Scalability in Analytics

Compare horizontal (scale-out) and vertical (scale-up) analytics strategies: benefits, costs, latency, fault tolerance, hybrid patterns, and when to switch.

Checklist for Building a Cloud Data Engineer Portfolio

Two to three production-ready cloud data projects beat dozens of tutorials for landing data engineering interviews.

Ultimate Guide to Stream Processing Frameworks

Compare Flink, Spark Structured Streaming, Kafka Streams, and Kinesis on latency, state management, and time semantics, and learn how to choose the right framework.

Ultimate Guide to Behavioral Data Engineer Interviews

Behavioral interviews can decide data engineer offers. Use the STAR method, quantify your impact, and prepare stories about pipeline failures, prioritization, and stakeholder communication.

5 Tools To Showcase Data Engineering Skills

Learn how Airflow, AWS, Snowflake, dbt, and Spark projects can power a standout data engineering portfolio with real end-to-end workflows.

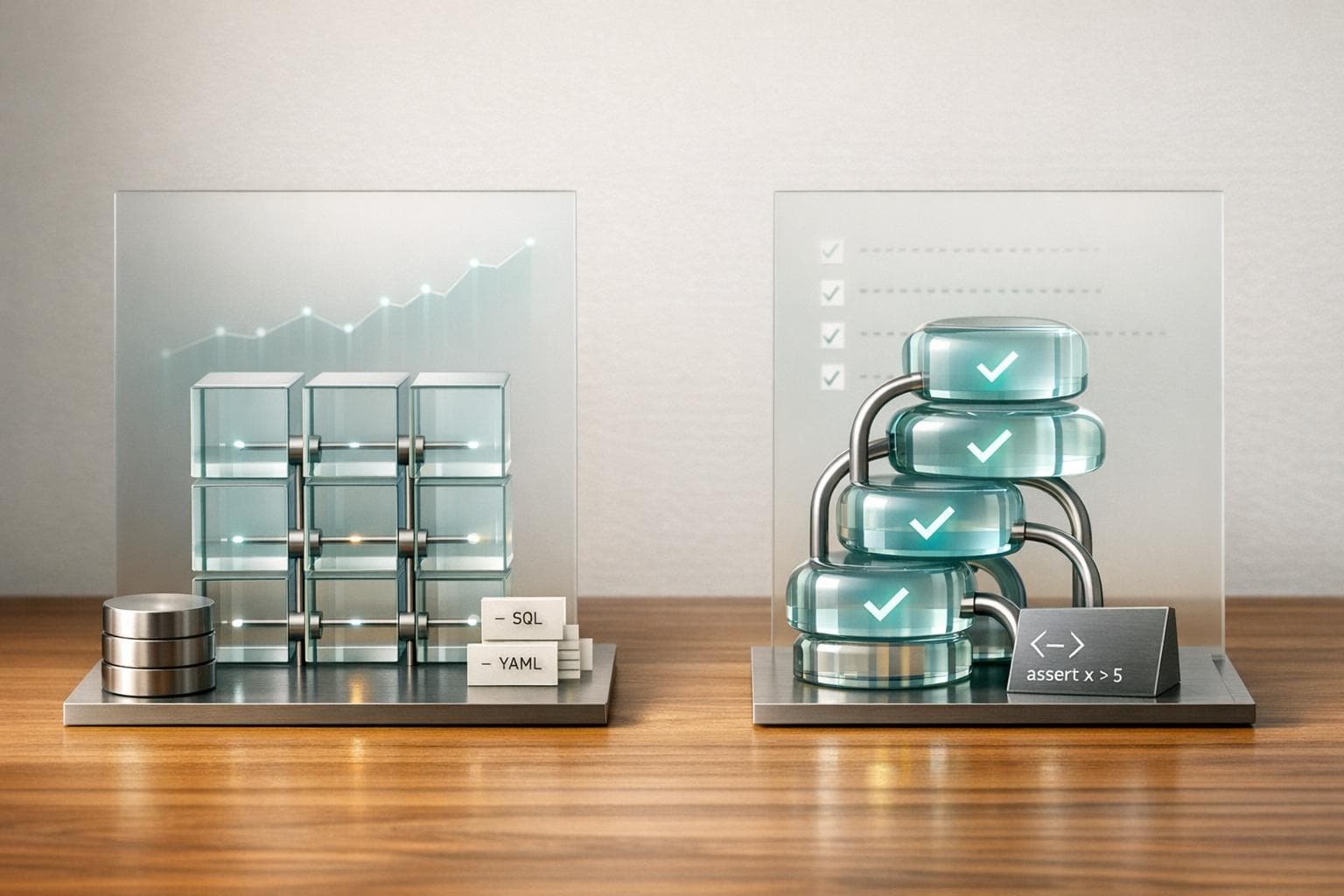

Soda vs. Great Expectations: Data Quality Tools

Compare Soda's SQL/YAML real-time monitoring and Great Expectations' Python validations to pick the best data quality tool for your team's workflow.