Blog

How to Build Scalable Data Quality Frameworks

Build a metadata-driven, automated data quality framework—prioritize critical data, automate validation, and monitor quality in real time.

Data Engineering Project Idea Generator

Struggling to find data engineering project ideas? Use our free tool to get tailored, innovative projects based on your skills and interests!

5 Steps to Automate Data Profiling in Snowflake

Automate Snowflake data profiling with DMFs, tasks, streams and Snowsight; define metrics, store results, and monitor anomalies and costs.

Unified Storage with Apache Iceberg: Future Trends

Iceberg unifies streaming and historical data with metadata-driven ACID tables, time travel, and AI-ready file formats.

Hive Query Optimization Questions Explained

Practical Hive optimization: partitioning, bucketing, compression, Tez, vectorized execution and CBO to speed queries and cut storage and compute costs.

dbt Core vs dbt Cloud: Key Differences

dbt Cloud reduces ops overhead while dbt Core gives full control—compare hosting, scheduling, security, onboarding, and real costs.

Databricks Logging: Setup and Tips

Configure Python or Log4j logging in Databricks, centralize JSON logs to Unity Catalog or cloud storage, set retention and integrate monitoring.

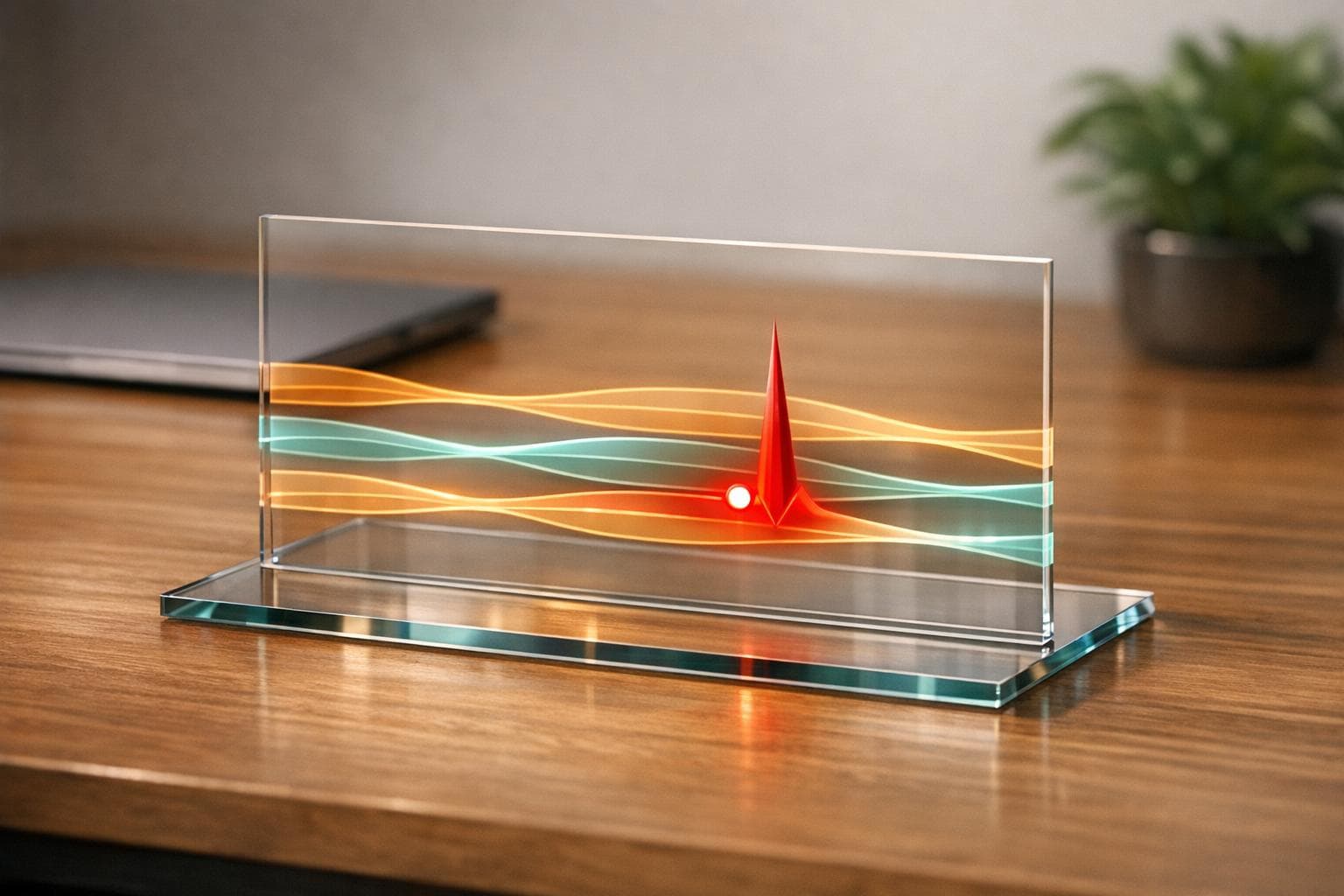

Structured Streaming for Live Video on Databricks

Build low-latency live video pipelines with a unified lakehouse streaming approach, efficient state stores, and medallion data layers.

Metadata-Driven Data Quality: How It Works

Use metadata, lineage, and AI to automate validation, catch errors early, and scale data quality across pipelines.

Databricks vs. Airflow for Event-Driven Workflows

Compare Databricks and Airflow for event-driven workflows—native triggers, Spark scaling, integration trade-offs, and cost differences.

Databricks Projects for Data Engineer Portfolios

Build end-to-end Databricks portfolio projects that integrate Snowflake and Airflow to showcase ML, ELT, and orchestration skills.

Databricks for Anomaly Detection in Data Pipelines

Build real-time anomaly detection pipelines in Databricks using Delta Live Tables, Unity Catalog, Isolation Forest models, and SQL alerts.