Analytics Engineering

33 articles tagged with "Analytics Engineering"

How Data Teams Drive Continuous Improvement

How data teams use audits, root-cause analysis, PDCA, feedback loops, agile methods and modern tools to improve data quality, reliability and delivery.

Access Control in Snowflake Migrations

Plan RBAC, enforce MFA, apply network and session policies, and monitor grants to secure Snowflake during and after migrations.

How Mentorship Boosts Data Career Growth

Mentorship helps data professionals learn tools faster, build soft skills, expand networks, and accelerate promotions with practical, real-world guidance.

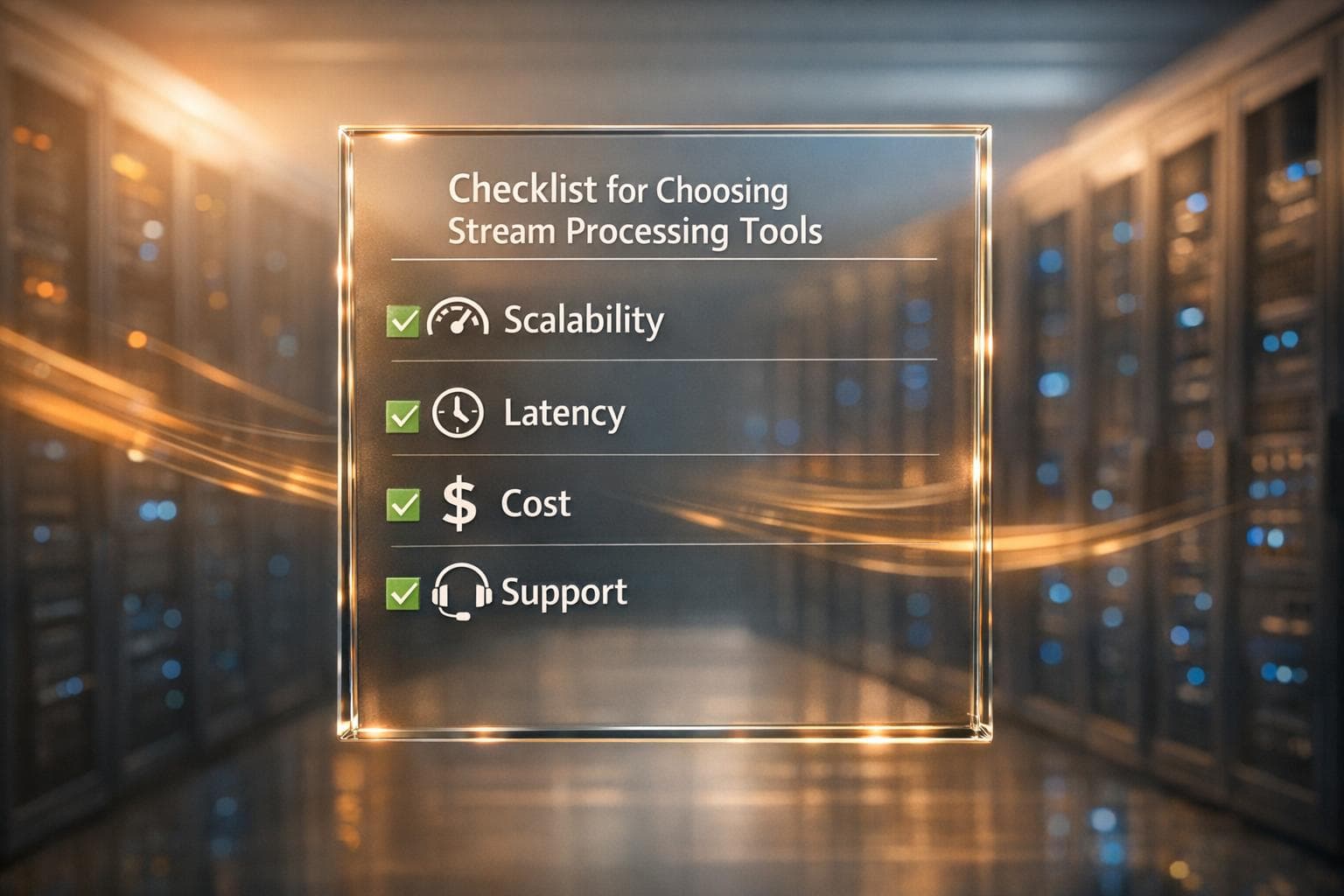

Checklist for Choosing Stream Processing Tools

A practical checklist for selecting stream processing tools based on scalability, latency, cost, and support.

Databricks for Financial Market Analysis

Use Databricks Lakehouse to combine real-time and historical market data, build streaming Delta pipelines, and train scalable predictive models.

Scaling with Databricks and Snowflake: Strategies

Compare horizontal vs vertical scaling for cloud data platforms, explore autoscaling policies, cost trade-offs, and hybrid best practices for performance and savings.

Polyglot Persistence: Database Per Service Pattern

How polyglot persistence and the database-per-service pattern let microservices pick optimal databases, scale independently, and manage consistency trade-offs.

Open Source ETL Tools: Comparison Guide 2026

Compare six open-source ETL tools—Airbyte, Airflow, NiFi, Pentaho, Meltano, and Talend (retired)—to find the best fit for scale, real-time needs, and team skills.

How to Optimize Query Concurrency in Snowflake

Reduce Snowflake query slowdowns by tuning MAX_CONCURRENCY_LEVEL, using auto-scaling, clustering keys, materialized views, and monitoring.

Error Handling in dbt: Best Practices

Practical dbt error-handling guide: diagnose compilation, model, and database errors; use tests, safe casts, macros, logs, and CI/CD to prevent failures.

Case Study: Optimizing Analytics with dbt and Snowflake

How dbt and Snowflake modernize analytics: three-layer pipelines, faster queries, lower costs, and AI-enabled features with real-world results.

10 Benefits of Domain-Oriented Data Architecture

Decentralized domain-oriented data architecture improves data quality, speed, scalability, governance, security, and sharing by treating data as products.