Data Engineering

57 articles in "Data Engineering"

dbt Core vs dbt Cloud: Key Differences

dbt Cloud reduces ops overhead while dbt Core gives full control—compare hosting, scheduling, security, onboarding, and real costs.

Databricks Logging: Setup and Tips

Configure Python or Log4j logging in Databricks, centralize JSON logs in Unity Catalog or cloud storage, set retention, and integrate monitoring.

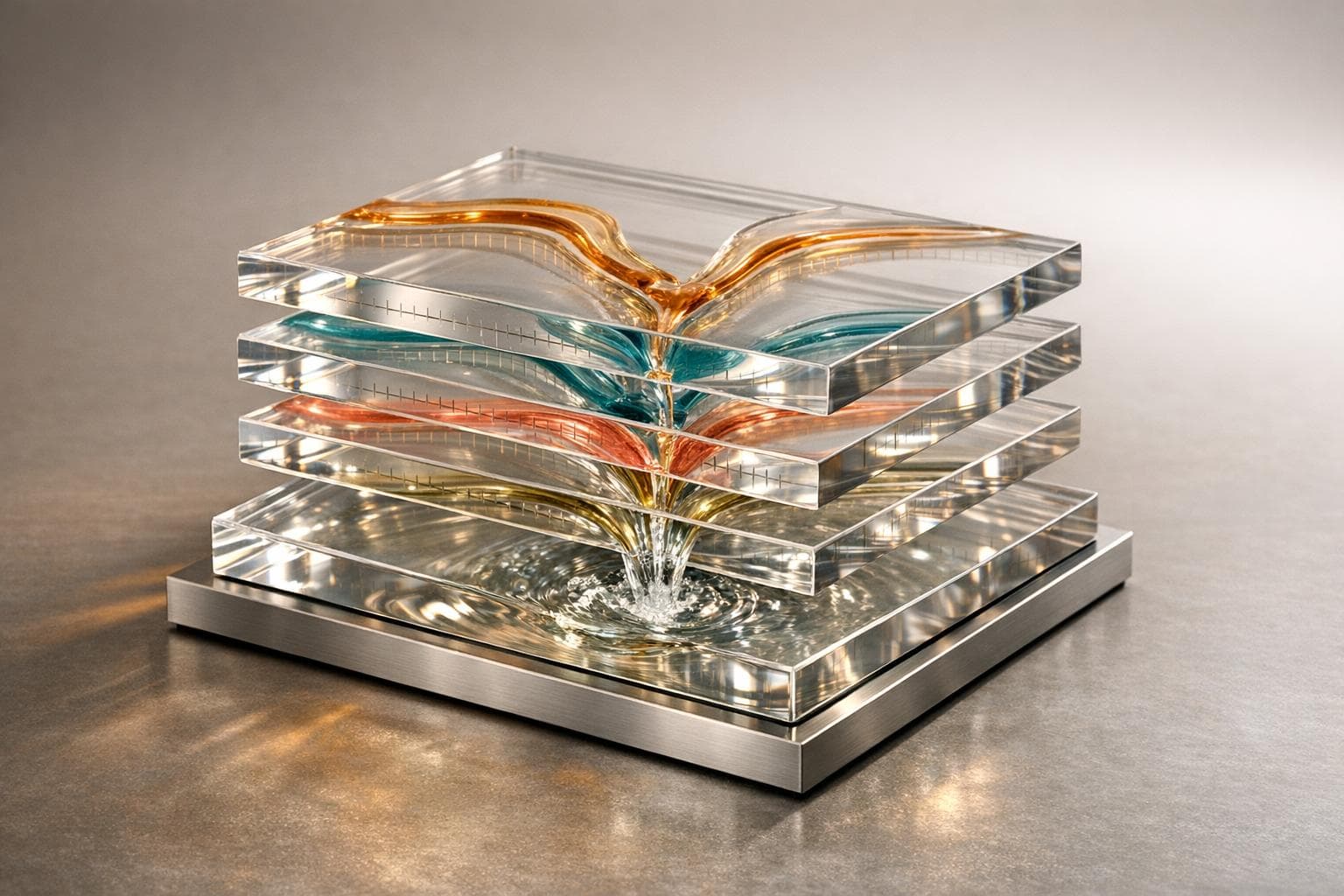

Structured Streaming for Live Video on Databricks

Build low-latency live video pipelines with a unified lakehouse streaming approach, efficient state stores, and medallion data layers.

Metadata-Driven Data Quality: How It Works

Use metadata, lineage, and AI to automate validation, catch errors early, and scale data quality across pipelines.

Databricks vs. Airflow for Event-Driven Workflows

Compare Databricks and Airflow for event-driven workflows—native triggers, Spark scaling, integration trade-offs, and cost differences.

Databricks Projects for Data Engineer Portfolios

Build end-to-end Databricks portfolio projects that integrate Snowflake and Airflow to showcase ML, ELT, and orchestration skills.

Databricks for Anomaly Detection in Data Pipelines

Build real-time anomaly detection pipelines in Databricks using Delta Live Tables, Unity Catalog, Isolation Forest models, and SQL alerts.

Horizontal vs. Vertical Scalability in Analytics

Compare horizontal (scale-out) and vertical (scale-up) analytics strategies — benefits, costs, latency, fault tolerance, hybrid patterns, and when to switch.

Checklist for Building a Cloud Data Engineer Portfolio

Two to three production-ready cloud data projects beat dozens of tutorials for landing data engineering interviews.

Ultimate Guide to Stream Processing Frameworks

Compare Flink, Spark Structured Streaming, Kafka Streams, and Kinesis on latency, state management, and time semantics, and learn how to choose the right framework.

Ultimate Guide to Behavioral Data Engineer Interviews

Behavioral interviews decide data engineer offers—use STAR, quantify impact, and prep stories on pipeline failures, prioritization, and stakeholder comms.

5 Tools To Showcase Data Engineering Skills

Learn how Airflow, AWS, Snowflake, dbt, and Spark projects can power a standout data engineering portfolio with real end-to-end workflows.