Data Engineering

58 articles tagged with "Data Engineering"

How Databricks Handles Schema Transformations

Guide to schema enforcement, schema evolution, Auto Loader, mergeSchema, type widening, and streaming best practices in Databricks.

How to Optimize Query Concurrency in Snowflake

Reduce Snowflake query slowdowns by tuning MAX_CONCURRENCY_LEVEL, using auto-scaling, clustering keys, materialized views, and monitoring.

Error Handling in dbt: Best Practices

Practical dbt error-handling guide: diagnose compilation, model, and database errors; use tests, safe casts, macros, logs, and CI/CD to prevent failures.

Snowflake in Hybrid Cloud Data Architecture

Unify storage, compute, and governance across hybrid clouds using hybrid tables, micro-partitioning, secure cross-cloud sharing, and pay-per-use scaling.

Error Handling in Airflow with Python Pipelines

Reliable Airflow pipelines require intentional error handling: retries, idempotent tasks, targeted exceptions, alerts, and robust logging.

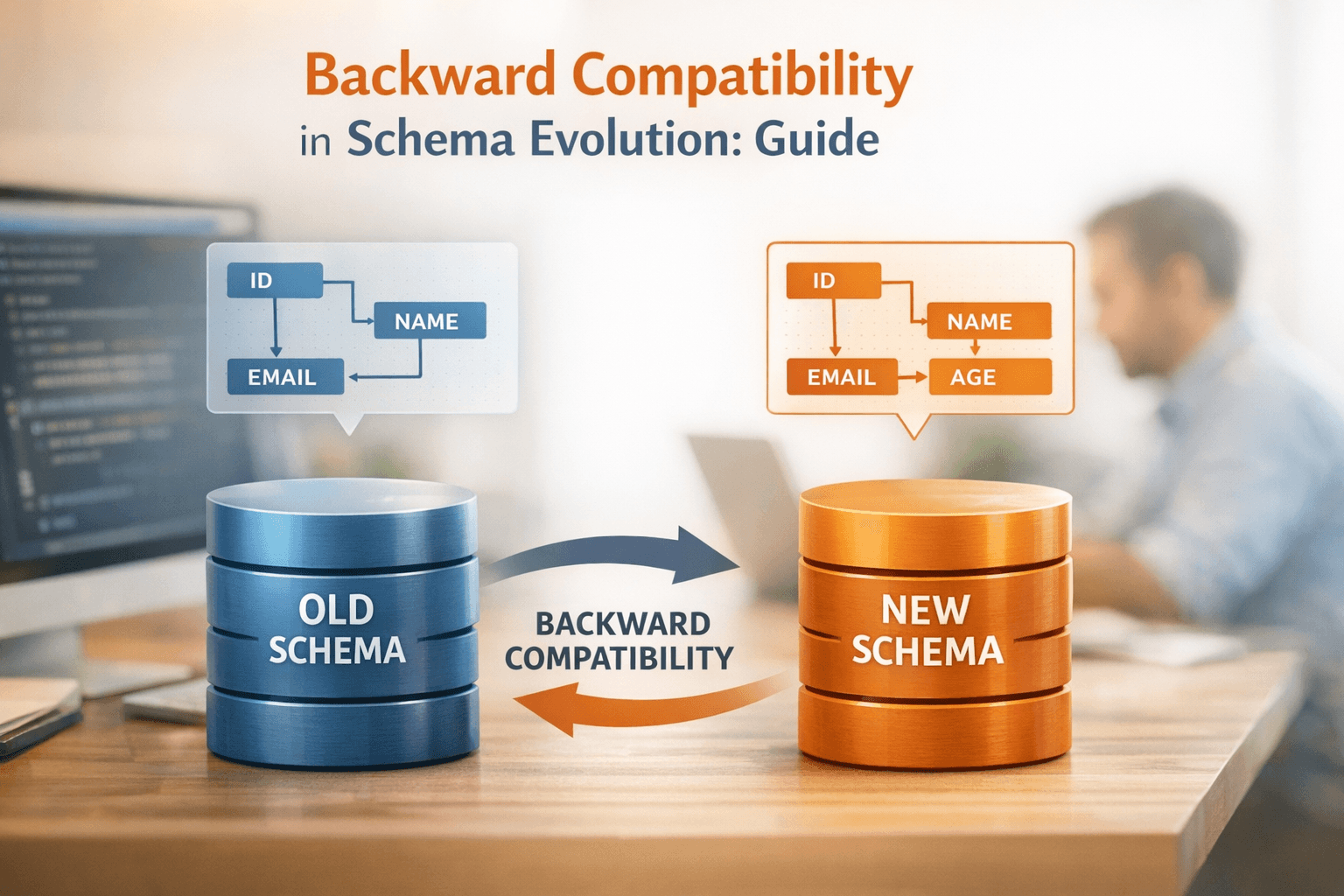

Backward Compatibility in Schema Evolution: Guide

Evolve schemas without breaking pipelines: learn safe changes, compatibility modes (BACKWARD vs BACKWARD_TRANSITIVE), registry best practices, and rollout tips.

Case Study: Optimizing Analytics with dbt and Snowflake

How dbt and Snowflake modernize analytics: three-layer pipelines, faster queries, lower costs, and AI-enabled features with real-world results.

10 Benefits of Domain-Oriented Data Architecture

Decentralized domain-oriented data architecture improves data quality, speed, scalability, governance, security, and sharing by treating data as products.

How Partitioning Impacts Query Performance

Table partitioning reduces data scanned, speeds up queries, lowers cloud costs, and improves resource use. Learn how to choose partition keys and sizes, plus best practices for partition pruning.

Kubernetes Best Practices for Data Teams

Kubernetes best practices for data teams: cluster setup, Spark/Airflow integration, resource requests, autoscaling, security, monitoring, GitOps, and cost.

How to Debug Airflow DAG Failures

Step-by-step checklist to diagnose and fix Airflow DAG failures: verify DAG import, inspect task logs, test with dag.test(), validate connections, and tune resources.

AWS vs Azure for Data Engineers: Tool Comparison

Compare AWS and Azure data engineering tools for storage, ETL, streaming, and ML, along with pricing, to choose the platform that fits your team's skills and infrastructure.