Cost Optimization

19 articles tagged with "Cost Optimization"

Databricks ETL Optimization for Petabyte Data

Guide to tuning Databricks for petabyte ETL: cluster sizing, Delta Lake layout, Auto Loader, AQE, and predictive optimization.

Case Study: Improving Dashboard Speed with Snowflake

Diagnose and fix Snowflake dashboard slowness with caching, warehouse tuning, clustering, materialized views and search optimization.

Snowflake Bottlenecks: Troubleshooting Tips

Query design, not warehouse size, is often the real reason Snowflake slows; profile queries, reduce I/O, optimize loads, and right-size resources.

Why dbt SQL Anti-Patterns Hurt Performance

Fix common dbt SQL anti-patterns—huge CTEs, missing staging, ephemeral overuse, and bad incremental filters—to cut costs and speed runs.

Ultimate Guide to Data Engineer Salary Negotiations

Neglecting salary negotiation can cost data engineers six figures—use market data, equity, and competing offers to secure fair pay.

5 Steps to Automate Data Profiling in Snowflake

Automate Snowflake data profiling with DMFs, tasks, streams and Snowsight; define metrics, store results, and monitor anomalies and costs.

Hive Query Optimization Questions Explained

Practical Hive optimization: partitioning, bucketing, compression, Tez, vectorized execution and CBO to speed queries and cut storage and compute costs.

dbt Core vs dbt Cloud: Key Differences

dbt Cloud reduces ops overhead while dbt Core gives full control—compare hosting, scheduling, security, onboarding, and real costs.

Databricks vs. Airflow for Event-Driven Workflows

Compare Databricks and Airflow for event-driven workflows—native triggers, Spark scaling, integration trade-offs, and cost differences.

Horizontal vs. Vertical Scalability in Analytics

Compare horizontal (scale-out) and vertical (scale-up) analytics strategies: benefits, costs, latency, fault tolerance, hybrid patterns, and when to switch.

Green Data Pipelines vs. Traditional Pipelines

Compare green and traditional data pipelines: energy use, cost savings, scalability, and techniques like lazy evaluation, sparse models, and carbon-aware scheduling.

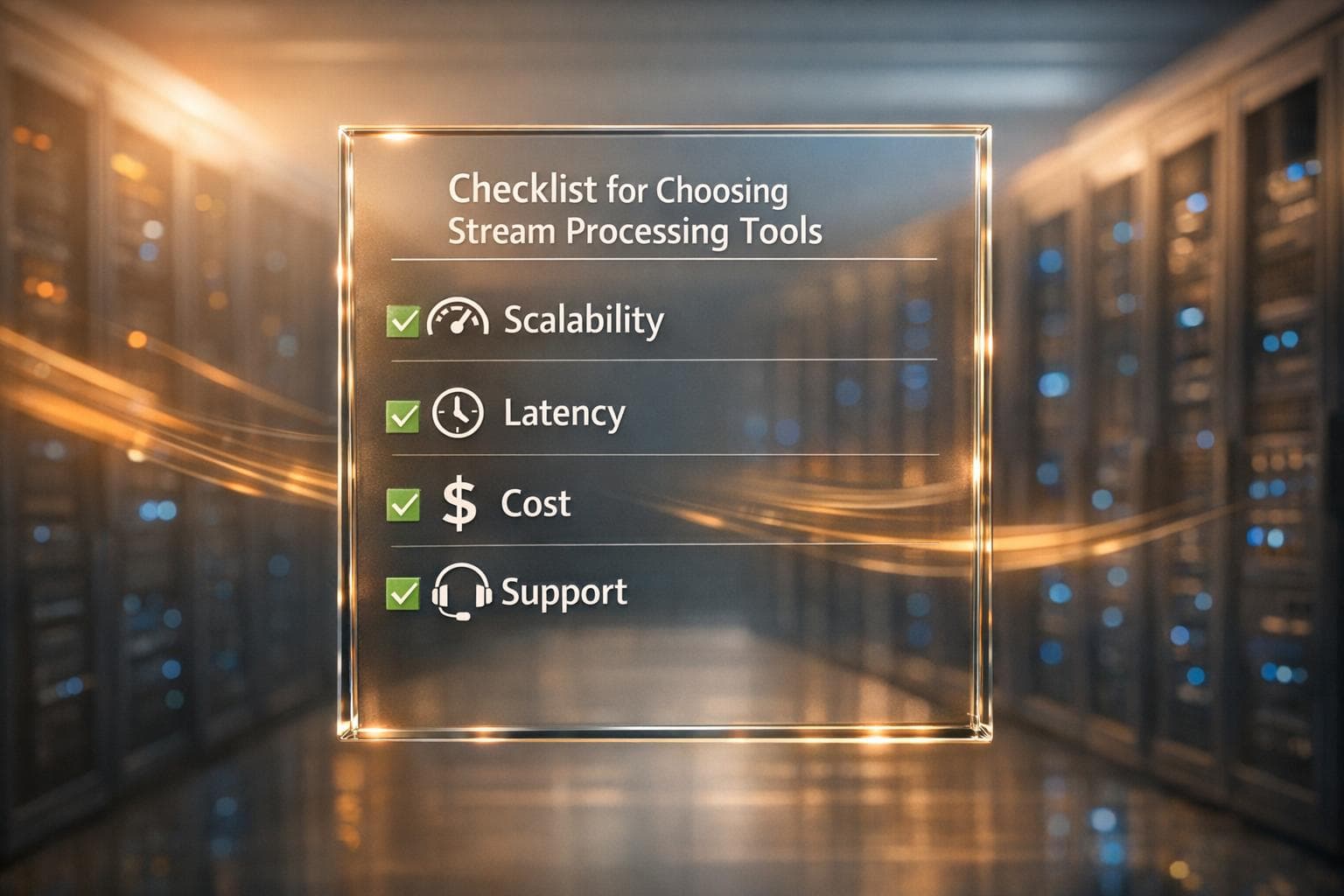

Checklist for Choosing Stream Processing Tools

A practical checklist for selecting stream processing tools based on scalability, latency, cost, and support.